Digital text, at the basis of all computer-mediated epistemological activity, appears at once an ephemeral and an enduring phenomenon. It is, as Wendy Hui Kyong Chun wrote, an “enduringly ephemeral” inscription that “create[s] unforeseen degenerative links between humans and machines.”1 At the site of its projection, on “soft” screens, the text shimmers and wanes, suspended in liquid crystal. At the site of its storage, on “hard” drives, among “floating gates” and ferromagnetic polarities, the text persistently adheres to recondite surfaces, where it multiplies and spreads like silicon dust.2

This inherent duplicity is the cause of conflicting critical accounts. Furthermore, it engenders a fundamental alienation from the material contexts of digital knowledge production. Reading digitally takes place, in part, at quantum scale, beyond the reach of human senses. Clandestine forces of capital and control subsequently contest such newly found microscopic expanses, thus limiting the scope of possible interpretive activity. Alienation ultimately threatens critical disempowerment. Laminate text is meant to name a structure by which a singular inscription fractures to occupy multiple surfaces and topographies. In this essay, I offer a media archaeology of that structure, that is to say, a history of laminate text coming into being. It proceeds in three parts, after brief theoretical and methodological discussions. In the first layer or “sediment,” we will observe the admixture of natural language and control code, meant for machine consumption. The second is characterized by its increasing opacity: inscription becomes inaccessible to human senses. Finally, a layer of simulated inscription is laid down to restore some of the apparent plasticity lost in the process of lamination.

Literary Composites (Theory)

Allow me to begin with several initial, theoretical observations on the specificity of digital inscription. Little separates ink from paper in print. Ink permeates paper; the conduit embeds into its medium on a molecular level, altering it permanently in the process. The amalgam of ink and paper thereafter entails specific material properties and their related affordances. What the medium is relates to what can be done with it.3 A book printed on high-quality acid-free paper can remain inert for decades and sometimes centuries. I do not mean to restate a fallacy: print is emphatically not a totally stable medium. It is, however, comparatively more stable than magnetic storage and less so than stone etching. At the very least, readers expect print materials to retain their shape as a document passes from one pair of hands into another. Interpreters of texts usually can (with some effort) make certain to find themselves, literally, on the same page, ensuring that their discussion concerns roughly the same piece of writing.

The architecture of contemporary computational media—screens, hard drives, and keyboards—cannot sustain the assumption of fixity. Electromagnetic inscription occupies several available surfaces at once. It exists on screen, itself a composite of glass panes and liquid crystal, and “in memory,” as manifested in the arrangement of silicon and circuitry. The word is in the wires. When reading digitally, on a personal computer for example, the distance between these localities may extend a few inches, enough to cover the space between screen and hard drive. In other contexts, when viewing text on a commercial “electronic book reader,” that space may span continents. Stored data are transmitted over vast distances, between content “owners” and its “users,” who hold only a temporary right to peruse, limited in scope to specific timelines and geographies. An electronic book borrowed from the New York Public Library may be stored in the city or in New Jersey perhaps, on rented library servers. It vanishes from my devices when the terms of my book borrowing expire. I cannot immediately localize such a text with any precision: I know only that it sometimes appears on my screen.

This unwieldy shape is the source of my puzzlement and an occasion to dwell in the discomfort of reading electronically. The digital sign stretches, elongates, and fractures across diverse material strata, subject to distinct local affordances. In some real sense it occupies multiple regions, jurisdictions, and time zones, with segments passing through New York, Northern Virginia, and Ohio; Mumbai, Seoul, and Singapore. It is concurrently onscreen and off.

Digital texts do not always behave in a way one would naturally expect. They engender spooky action at a distance: unsaved drafts, ghost typing, misfiled documents that forever disappear into the ether. They are somewhere, “in storage,” just not where I can find them. A simple act of erasure on screen, as when one backspaces over a word written in error, signifies a comparable action in print. The erasure is a metaphor, however. On disk, it conveys an entirely new set of connotations, not congruent with the action implied on screen.4 The incongruence between what you see and what you get can be benign. Readers and writers are often interested in handling surface representation alone, on the level of words and ideas. In this way, I sometimes tell my students: “I don’t care what edition of the assigned novel you purchase. Read any of them and we will figure out the page numbers later.” In other contexts, our inability to perceive the mechanics of inscription at depth severely undermines critical acuity. Imagine for example a scholar or a journalist who needs to redact unpublished materials in order to protect her sources. Surface, metaphoric erasure is insufficient for these purposes. Words erased on screen persevere through deeper structures on disk and among remote servers, making them susceptible to malicious breach or state-sponsored surveillance. To guarantee anonymity we want to destroy all copies of a text, literally not just metaphorically. But how and where?

The affordances of laminate text depend not only on the medium, but also on the contexts of its reception. The same “source text” may be transformed according to its geography or the identity of its reader. As texts change hands they change themselves. A digital document may respond to a reader’s location, gender, age, ethnicity, or immigration status. Think of an online newspaper, where the very composition of headlines and stories is routinely tailored to individual readers. Such dynamic inscriptions contain rules of their own transformation. Content and code intertwine to produce an amalgamated artifact, designed for remote content control. An electronic “online” book implies a socialized environment, even when consumed in intimacy. Friends, advertisers, censors, and agents of surveillance are always potentially present, in part because digital text is in perpetual motion: moving past and through multiple infrastructures on the way to the iris.

The chain of textual transmission is difficult to reconstitute. Governments that monitor reading habits by judicial means prosecute those who express opinions contrary to the reigning ideology. More insidiously, mechanisms of “soft” censorship can be encoded into the fabric of the laminate itself, through technologies that outright prevent prohibited ideological formations from appearing on screen. Unlike those censored by an explicit edict, readers under the reach of remote, algorithmic governance are not immediately aware of the control mechanisms structuring their everyday interpretive experience. They are left instead with a rough remainder of an imposed identity. A dynamic text has the power to determine its audience: a man will be interested in cars and women, an Eastern European in jewelry and Adidas shoes, an inmate or otherwise an enemy of the people, in a blank page and a home visit from your friendly librarian.

The stratified nature of digital inscription poses obvious challenges in the social sphere, which this essay can address only obliquely. Our ability to mobilize against censorship or surveillance is in peril when such mechanisms operate at a microscopic scale, requiring specialized skills and tools for interpretation. A more general tactical response requires not just the writing of histories, but training and advocacy efforts. More narrowly, laminate texts present a challenge to the practice of literary hermeneutics. How does critical interpretive practice persist in conditions where readers can no longer rely on the continuing stability of the medium? The theoretical problem is not one of binary definitions. A text is neither an immanent object nor a transcendent idea. We are confronted instead with documents that do not converge on a single location and whose multivalence is derived from their structural diffusion. Composite media force us to reconsider long-standing critical assumptions about the physics of inscription at the foundation of hermeneutics.5

Stratigraphy (Methods)

Several parallel literary and media archaeologies were formative in my approach to thinking through the history of laminate inscription. The specter of Friedrich Kittler haunts many studies at the intersection of literary theory and media history, including this one. Kittler’s legacy is complicated, however, by his sometimes fatalistic pronouncements. “Under the conditions of high technology, literature has nothing more to say,” he wrote in the conclusion of Gramophone, Film, Typewriter.6 Katherine Hayles, Lori Emerson, Lisa Gitelman, Matthew Kirschenbaum, and others have commenced the patient task of mapping the material specifics of these changing conditions.7 Their efforts provided a much-needed materialist corrective to scholarship that initially emphasized the metaphysics of new media.

Yet, the materialist perspective should not be overstated. Writers of a previous generation, such as Michael Heim and Pamela McCorduck, captured an important phenomenological insight when they referred to the “ephemeral quality” of electronic text, calling it “impermanent, flimsy, malleable, [and] contingent.”8 Electricity is truly a mercurial medium. Inscriptions on screen really do flicker and disappear at the loss of power. Our mistake is to treat digital text in analogy to print, conceived along a singular, two-dimensional plane. The concept of laminates, which I borrow from geology, helps reconcile a discrepancy that characterizes the two conflicting traditions in the study of new media.

Electronic text extends in multiple dimensions simultaneously. Its facets afford different views and strategies of interpretation depending on a reader’s vantage. The localized physics of inscription determine many of its specific capabilities. Where paper rapidly degrades under the heel of an eraser, a solid state drive can be erased millions of times before failure. Crucially, the medium is also affected by the physical limitations placed on the reader. A drive sealed or encrypted can be theoretically amenable to a forensic reading, but practically inaccessible. I turn to the field of Science and Technology Studies and particularly to the recent work of Mara Mills, Hans-Jörg Rheinberger, Jessica Riskin, and Nicole Starosielski, among others who in one way or another have inspired me to take a distinctly infrastructural approach to the historical study of such multifaceted epistemic artifacts.9

I further use the concept of “stratigraphy” literally, in contrast to media “archaeology,” where the spatial terms are used in their more evocative, metaphoric sense.10 The art of analyzing sedimentary rock layers dates back at least to the geological observations of the seventeenth-century Danish scientist Nicolas Steno, later extended by John Strachey in his Observations on the Different Strata of Earths and Minerals (1727), and to the work of William Smith and William Maclure, who drew beautifully detailed cross-sections of British and American landscapes [Figure 1].

Fig. 1: Stratigraphic map legend: “Sketch of the Succession of Strata and their relative Altitudes.”11

Stratigraphy is an apt borrowing when applied to the history of computer technology, where the various layers of historical development are often extant on one and the same device. In this manner, the legacy of machine alphabets such as the Morse and Baudot alphabets is still present on modern devices through the American Standard Code for Information Interchange (ASCII) and Unicode Transformation Format (UTF) conventions. A “conversational,” text-based model of human-computer interaction developed in the 1960s coexists with the later, graphic-based “direct-interaction” modes in the innards of every modern mobile phone, game console, or tablet.12 The same can be said about “low-level” assembly languages, continuously in use since the 1950s alongside their modern “high-level,” strongly-abstracted counterparts.

For the purposes of this essay, which concerns the paradoxically conflicting nature of electromagnetic inscription, at once enduring and ephemeral, we may separate the development of electromagnetic composites into three historical periods, each leaving behind a distinctive media sediment. A summary of that history is as follows:

First: with the advance of telecommunications, we observe the increasing admixture of human-readable text and machine-readable code. Removable storage media such as ticker tape and punch cards embodied a machine instruction set meant to influence a mechanism, which in turn produced human-legible inscription. Unintelligible (to humans without special training) machine control code was thereby mixed with plain text, the “content” of communication. The two data streams converged in transmission, and then diverged into separate reception channels. An encoded message could at once mean “hello” to a human recipient, and “switch gears,” to a machine.

Second: whereas ticker tape and punch cards were legible to the naked eye, magnetic tape, a medium which supplanted paper, made for an inscrutable substance, inaccessible directly without instrumentation. In the 1950s and 1960s machine operators worked blindly, using complicated workarounds to verify equivalence between data input, storage, and output. Writing began to involve multiple typings and printings. Specialized magnetic reading devices were developed to establish correspondence between input, storage content, and output of entered text. The physical properties of electromagnetic inscription also allowed for rapid re-mediation. Tape was more forgiving than paper: it could be written and rewritten at high speeds and in volume. The opacity of the medium also placed it, in practice, beyond human sense.

Finally: the appearance of cathode-ray tube (CRT) displays in the late 1960s restored a measure of legibility lost to magnetic storage. The sign reemerged onscreen in a simulacrum of archived inscription: typing a word on a keyboard produced one sort of a structure on tape or disk and another on screen. The two related contingently, without necessary congruence. The lay reader-writer lost the direct means to secure a correspondence between visible trace and stored mark. An opaque black box of “word processing,” rules for transmediation, began to intercede between simultaneous acts of reading and writing.

In what follows, I have selected three paradigmatic textual artifacts to mark this episodic history: Hyman Goldberg’s Controller, patented in 1911; the Magnetic Reader introduced by Robert Youngquist and Robert Hanes in 1958; and the Display System, introduced by Douglas Engelbart in 1968. Each represents a distinct sediment; in aggregate, they compose contemporary textual laminates. Together, these devices document a story of a fissure: the inscription appears on one plane to the eye, on another to the hand for editing, and on yet another to the machine, for storage and automated processing. The sign attains new capabilities at each stage of its physical transfiguration, from thought to keystroke, pixel, and electric charge: ephemeral in some aspects and enduring in others.

First Sediment: Code and Control

The turn of the twentieth century was a pivotal period in the history of letters. It saw the languages of people and machines enter the same mixed communications stream. Language became automated through the use of artificial alphabets, such as Morse and Baudot codes. The great variety of human scripts could now be reduced to a set of discrete and exactly reproducible characters. The mechanization of type introduced new control characters into circulation, capable of changing machine states at a distance. Initially, such state changes were simple: “begin transmission,” “sound error bell,” or “start new line.” With time, they developed into what we now know as programming languages. Content meant for human consumption thereby parted from code meant to control electromechanical mechanisms. A text stream could “mean” one thing to a human interpreter and another to a machine one. Early remote control capabilities were quickly adapted to automate everything from radio stations to advertising billboards and knitting machines.13 These expanded textual affordances came at a price of legibility. At first, a cadre of trained machine operators was required to translate natural language into machine-transmittable code. Eventually, specialized equipment automated this process, removing humans from the equation.

Hundreds of alphabet systems vying to displace Morse code were devised to speed up automated communications. These evolved from variable-length alphabets such as Morse and Hughes codes, to fixed-length alphabets such as Baudot and Murray codes. The systematicity of the signal (equal length at regular intervals) minimized the “natural” aspects of human language, affect and variance, in favor of “artificial” ones such as consistency and reproducibility.

The fixed-length property of Bacon’s cipher, later implemented in the 5-bit Baudot code, signaled the beginning of the modern era in serial communications. Baudot and Murray alphabets were designed with automation in mind.14 Both dispensed with silent separator signals that distinguished character blocks in Morse code. Signal units were to be divided into letters by count, with every five units representing a single character. Temporal synchronization was therefore easier to achieve, given the receiver’s ability to read the message from the start of a transmission.
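The difference between division by silence and division by count can be made concrete in a brief sketch. What follows is an illustration under stated assumptions, not a reconstruction of any historical apparatus: the five-unit table is a made-up mapping (A as 00001, B as 00010, and so on) rather than the actual Baudot or Murray assignments, and the function names are hypothetical. Only the handful of Morse letters used for contrast follow the international convention.

```python
import string

# Hypothetical five-unit alphabet: A=00001, B=00010, ... Z=11010.
# These are NOT the historical Baudot or Murray assignments.
TABLE = {format(i, "05b"): ch for i, ch in enumerate(string.ascii_uppercase, start=1)}
ENCODE = {ch: bits for bits, ch in TABLE.items()}


def encode_fixed(text):
    """Concatenate five-unit blocks with no separator between characters."""
    return "".join(ENCODE[ch] for ch in text)


def decode_fixed(signal, width=5):
    """Divide the signal into letters by count: every `width` units is one character."""
    if len(signal) % width != 0:
        raise ValueError("signal length is not a multiple of the block width")
    blocks = [signal[i:i + width] for i in range(0, len(signal), width)]
    return "".join(TABLE[block] for block in blocks)


# A small excerpt of International Morse, kept for contrast.
MORSE = {"E": ".", "I": "..", "S": "...", "H": "....", "T": "-"}


def morse_readings(signal):
    """List every way an unseparated Morse signal could be split into letters."""
    if not signal:
        return [""]
    readings = []
    for letter, code in MORSE.items():
        if signal.startswith(code):
            rest = signal[len(code):]
            readings += [letter + tail for tail in morse_readings(rest)]
    return readings
```

Under these assumptions, `decode_fixed(encode_fixed("HELLO"))` recovers the word from an unbroken run of twenty-five units, whereas four unseparated Morse dots admit several competing readings ("H," "SE," "II," and so on). The silent separator that resolves the ambiguity in Morse is precisely what the fixed-length alphabets could do without.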

Fixed-length signal alphabets drove the wedge further between human and machine communication. Significantly, automated printing telegraphs decoupled information encoding from its transmission. The encoding of fixed-length messages could be done in advance, with more facility and in volume. Prepared messages could then be fed into a machine without human assistance. In 1905 Donald Murray wrote that the “object of machine telegraphy [is] not only to increase the saving of telegraph wire […] but also to reduce the labour cost of translation and writing by the use of suitable machines.”15 Baudot and Murray alphabets were not only more concise but also simpler and less error-prone to use.

With the introduction of mechanized reading and writing techniques, telegraphy split from telephony to become a means for truly asynchronous communication. It displaced signal transmission in time as it did in space. The essence of algorithmic control, amplified by remote communication devices, lies in its ability to delay execution. A cooking recipe, for example, allows novice cooks to follow instructions without the presence of a master chef. Similarly, delayed communication could happen in absentia, according to predetermined rules and instructions. A message could instruct one machine to sell a stock and another to respond with a printed confirmation.

Just as perforated music sheets displaced the act of live music performance in time, programmable media deferred the act of inscription. Scribes became programmers. One could strike a key in the present, only to feel the effects of its impact later, at a remote location. Decoupled from its human sources, inscription was optimized for efficiency. Electromechanical readers ingested prepared ticker tape and punch cards at rates far exceeding the possibilities of hand-operated Morse telegraphy.

Besides encoding language, the Baudot schema included several special control characters. The “character space” of an existing alphabet could be expanded by switching a receiving mechanism into a special “control mode,” in which every combination of five bits represented an individual control character, instead of a letter. In this manner, human content and machine control occupied the same spectrum of communication, eventually diverging into separate reception channels.
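The logic of a shared stream that diverges at reception can be sketched as a small state machine. This is a minimal illustration, assuming invented block assignments: the two shift signals, the letter table, and the control names below are hypothetical and do not reproduce the historical Baudot table. The point is only that one and the same five-unit block is routed to the page in one mode and to the mechanism in another.

```python
# Hypothetical assignments for illustration only (not the historical Baudot table).
SHIFT_TO_CONTROL = "11111"
SHIFT_TO_LETTERS = "11011"
LETTERS = {"00001": "H", "00010": "I"}
CONTROLS = {"00001": "RING_BELL", "00010": "NEW_LINE"}


def receive(signal, width=5):
    """Split the stream by count and route each block to the page or to the machine."""
    page = []      # human-legible output
    machine = []   # control actions meant for the mechanism
    mode = "letters"
    for i in range(0, len(signal), width):
        block = signal[i:i + width]
        if block == SHIFT_TO_CONTROL:
            mode = "control"
        elif block == SHIFT_TO_LETTERS:
            mode = "letters"
        elif mode == "letters":
            page.append(LETTERS[block])
        else:
            machine.append(CONTROLS[block])
    return "".join(page), machine


# One stream, two reception channels.
stream = "00001" + "00010" + SHIFT_TO_CONTROL + "00001" + SHIFT_TO_LETTERS + "00001"
printed, actions = receive(stream)
```

Here the block 00001 appears three times in the stream: twice it prints a letter, and once, after the shift, it rings the bell. The meaning of the block depends on the state of the receiver, not on the block itself.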

By the 1930s, devices variously known as printer telegraphs, teletypewriters, and teletypes displaced Morse code telegraphy as the dominant mode of commercial communication. A 1932 U.S. Bureau of Labor Statistics report estimated a more than 50 percent drop in Morse code operators between 1915 and 1931. Morse operators referred to teletypists on the sending side as “punchers” and those on the receiving side as “printer men.”16 The printer men responsible for assembling pages from ticker tape were called “pasters” and sometimes, derisively, “paperhangers.”17

Teletype technology automated the entire process of transmission, rendering punchers, pasters, and paperhangers obsolete. Operators could enter printed characters directly into the machine, using a keyboard similar to the typewriter, which, by that time, was widely available for business use. A teletype machine would then automatically transcode the input into transmitted signal and then back from the signal onto paper on the receiving end.

Control codes thus occupied the gray area between “plain text” and “cipher,” in both the legal and the technical senses of those terms. International treaties guaranteed the passage of only those communications encoded in plain text. According to conventions, code was considered intelligible only when the schema for its decoding was available to the transmitter.18 Without such keys, a transmitted message was considered secret and therefore not subject to free passage across national boundaries. In practice, the proliferation of encodings and machine instructions had the effect of selective illiteracy, if not outright secrecy. Telegraph operators routinely used multiple cheat sheets to help decipher encoded messages. In this sense, telegraphy reintroduced a pre-Lutheran problem of legibility into human letters. To speak in telegraph was to learn arcane encodings, not spoken in the vernacular.

A number of failed communication schemas consequently attempted to bridge the rift between human and machine alphabets. “You must acknowledge that this is readable without special training,” Hyman Goldberg wrote in the patent application for his 1911 Controller.19 The device was meant “to provide [a] mechanism operable by a control sheet which is legible to every person having sufficient education to enable him to read.” In an illustration attached to his patent, Goldberg pictured a “legible control sheet […] in which the control characters are in the form of the letters of the ordinary English alphabet.”20 Goldberg’s perforations did the “double duty” of carrying human-readable content and mechanically manipulating machine “blocks,” “handles,” “terminal blades,” and “plungers.”21 Unlike other schemas, messages in Goldberg’s alphabet could be “read without special information,” effectively addressing the problem of code’s apparent unintelligibility [Figure 2].22 At the surface of Goldberg’s control sheets, the inscription remained visible and un-encoded. It cut a perforated figure, rendered in English alphabet, which, incidentally, also effected a mechanical response.

Fig. 2: Goldberg’s control cards. Machine and human languages coincide on the same surface. The perforations that actuate levers can also be read “without special training,” in contrast to other text encodings.23

Whatever challenges punch cards and ticker tape presented for readers, these were soon complicated by the advent of magnetic tape. Goldberg’s perforations were at least visible through the paper conduit. The magnetic mark that followed was not only in code, but also illegible to the naked eye.

Second Sediment: Magnetic Storage

Goldberg’s Controller and similar devices illustrated a growing concern with the comprehensibility of machine alphabets. One could hardly call early programmable media ephemeral. Anecdotes circulate about Father Roberto Busa, an early pioneer of computational philology, who in the 1960s carted piles of punch cards around Italy in the back of his pickup truck.24 Codified inscription, before its electromagnetic period, was fragile and unwieldy. Making an error on ticker tape or punch card entry required cumbersome corrections and sometimes wholesale reentry. Once mispunched, a card was near ruined, although it was still possible to fix minor errors with a bit of glue. Embossed onto ticker tape or punched into the card, early software protruded through the medium. Morse code and similar alphabet conventions left a visible mark on the paper. They were legible if not always intelligible.

Magnetic tape changed the commitment between inscription and medium. It gave inscription a temporary shelter, where it could dwell lightly and be transformed, before finding its more permanent, final shape on paper. A new breed of magnetic storage devices allowed for the manipulation of words in “memory,” on a medium that was easily erased and rewritten. Magnetic charge adhered lightly to tape surface. This light touch gave words their newfound ephemeral quality. It also made inscription physically illegible. At the 1967 Symposium on Electronic Composition in Printing, Jon Haley, staff director of the Congressional Joint Committee on Printing, spoke of “compromises with legibility [that] had been made for the sake of pure speed in composition and dissemination of the end product.”25

In applications such as law and banking, where fidelity between input, storage, and output was crucial, the immediate illegibility of magnetic storage posed a considerable engineering challenge. After the advent of teletype but before cathode ray tubes, machine makers used a variety of techniques to restore a measure of congruence between magnetic inscription and its paper representation. What was entered had to be continually verified against what was stored.

The principles of magnetic recording were developed by Oberlin Smith (among others), an American engineer who also filed several patents for inventions related to weaving looms. In 1888, inspired by Edison’s mechanical phonograph, Smith made public his experiments with an “electrical method” of sound recording using a “magnetized cord” (cotton mixed with hardened steel dust) as a recording medium. These experiments were later put into practice by Valdemar Poulsen of Denmark, who patented several influential designs for a magnetic wire recorder.26

Magnetic recording on polyester tape or coated paper offered several distinct advantages over mechanical perforation: tape was more durable than paper, it could fit more information per square inch, and it was reusable. In 1948, Marvin Camras, a physicist with the Armour Research Foundation, wrote about the advantages of magnetic recording, which “may be erased if desired, and a new record made in its place.”27

Most early developments in magnetic storage were aimed at sound recording. Engineers at the time did however imagine their work in dialog with the long history of letters, and not just sound. In an address to the Franklin Institute on December 16, 1908, Charles Fankhauser, the inventor of the electromagnetic telegraphone, spoke as follows, comparing magnetic recording to the invention of the Gutenberg press:

It is my belief that what type has been to the spoken word, the telegraphone will be to the electrically transmitted word […] As printing spread learning and civilization among the peoples of the earth and influenced knowledge and intercourse among men, so I believe the telegraphone will influence and spread electrical communication among men.28

In that speech Fankhauser also noted the evanescence of telegraph and telephone communications. The telephone, he complained, fails to preserve “an authentic record of conversation over the wire.”29 By contrast, Fankhauser imagined his telegraphone being used by

the sick, the infirm, [and] the aged […] A book can be read to the sightless or the invalid by the machine, while the patient lies in bed. Lectures, concerts, recitations—what one wishes, may be had at will. Skilled readers or expert elocution teachers could be employed to read into the wires entire libraries.30

Anticipating the popularity of twenty-first-century audio formats like podcasts and audiobooks, Fankhauser spoke of “tired and jaded” workers who would “sooth [themselves] into a state of restfulness” by listening to their favorite authors.31 Fankhauser saw his “electric writing” emerge as “clear” and “distinct” as “writing by hand,” “an absolutely legal and conclusive record.”32 His statements give contemporary readers a sense of the excitement that surrounded advances in early electromagnetic storage technology.

In 1909, Fankhauser thought of magnetic storage primarily as an audio medium, which combined the best of telegraphy and telephony. Magnetic technology for data storage did not mature until the 1950s, when advances in composite plastics made it possible to manufacture tape that was cheaper and more durable than its paper or cloth alternatives.33 The state-of-the-art relay calculator, commissioned by the Bureau of Ordnance of the Navy Department in 1944 and built by the Computation Laboratory at Harvard University in 1947, still made use of standard-issue telegraph “tape readers and punchers” adapted for computation with the aid of engineers from Western Union Telegraph Company.34 It was equipped with a number of Teletype Model 12A tape readers and Model 10B perforators, using 11/16-inch-wide paper tape, partitioned into “five intelligence holes,” where each quantity entered for computation took up thirteen lines of code.35 Four Model 15 Page-Printers were needed to compare printed characters with the digits stored in the print register on ticker tape. The numerical inscription in this setup was therefore already split between input and output channels, with input stored on ticker tape and output displayed in print.

The Mark III Calculator, which followed the Computation Laboratory’s earlier efforts, was also commissioned by the Navy’s Bureau of Ordnance. It was completed in 1950. Its “floor plan” (or “system architecture,” in modern terms) did away with punch cards and ticker tape, favoring instead an array of large electromagnetic drums coupled with reel-to-reel tape recorders. The drums, shellacked by hand, were limited in their storage capacity, but could revolve at much faster speeds than the tape reels. They were thus used for fast, temporary internal storage. A single Mark III calculator used twenty-five such drums, rotating at 6,900 rpm, each capable of storing 240 binary digits.36

In addition to the fast “internal storage” drums, the Mark III floor plan included eight slow “external storage” tape-reader mechanisms. Tape was slower than drums but also cheaper. It easily extended to multiple reels, thus approaching the architecture of an ideal Turing machine, which called for tape of “infinite length.”37 In practice, tape was in limited supply, available in segments long enough to answer the needs of military computation. Unlike stationary drums, tape was also portable. Operators could prepare machine instruction in advance, in a different room, at the allotted “instructional tape preparation table.”

Since it was impossible to transfer data from the slow-moving tape reel to the fast drums directly, information on tape was transferred first to an intermediary variable-speed drum, which could then be accelerated to match the higher rotating speeds of the more rapid internal storage drums for yet another transfer. The Mark III Calculator was further equipped with five printers “for presenting computed results in a form suitable for publication.” The printers were used to set the “number of digits to be printed, the intercolumnar and interlinear spacing, and other items related to the typography of the printed page.”38 Even a simple act of calculation on Mark III involved multiple materials, encodings, and movements across diverse media. In reflecting on these early supercomputers, one imagines the pathway of a single character as it crosses surfaces, through doorways and interfaces, gaining new shapes and temporalities with each transition.

To enter the stream of calculations, numbers had to be transcribed from paper to binary electromagnetic notation. For this task operators sat at the “numerical tape preparation table,” yet another separate piece of furniture. Data were stored along two channels, running along tape length. Tape preparators worked blindly, unable to see whether their intended marks registered properly upon first entry. To prevent errors, each number had to be entered twice, first into channel A and then into channel B. An error bell sounded when the first quantity did not match the second, in which case the operator would reenter the mismatched digits. To ensure “completely reliable results,” the machine manual additionally encouraged the use of one of the five Underwood electric teletypes attached to the machine. A paper printout of all channels helped confirm magnetic input visually.39
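The double-entry check described above can be sketched in a few lines of Python. This is a minimal illustration and not a reconstruction of the Mark III circuitry: the function names `verify_double_entry` and `error_bell`, the modeling of each channel as a string of digits, and the reporting of mismatch positions are all assumptions made for the sake of the example.

```python
def verify_double_entry(channel_a: str, channel_b: str) -> list[int]:
    """Return the positions at which two typings of the same number disagree.

    Hypothetical model: channel A holds the first typing, channel B the second.
    An empty list means the two entries match.
    """
    length = max(len(channel_a), len(channel_b))
    mismatches = []
    for i in range(length):
        # A digit missing from the shorter entry counts as a mismatch.
        a = channel_a[i] if i < len(channel_a) else None
        b = channel_b[i] if i < len(channel_b) else None
        if a != b:
            mismatches.append(i)
    return mismatches


def error_bell(channel_a: str, channel_b: str) -> bool:
    """Ring (return True) whenever the two channels differ anywhere."""
    return bool(verify_double_entry(channel_a, channel_b))
```

In this sketch the operator learns only that, and where, the two typings diverge. Neither channel is ever displayed, which is why the manual's paper printout remained the sole visual confirmation.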

Readers not interested in additional case studies from the period can advance to the penultimate paragraph of this section, where I pick up on the theoretical consequences of magnetic stratification. The point of this history, already apparent in the Mark III case study, is to document the rapid proliferation of writing surfaces. Where we started with a single flat layer of ink and paper, we conclude with a literal palimpsest of inscriptions, evident in the schematics of mid-century computers and typewriters.

Advances in magnetic storage found their way into small businesses and home offices a decade later. In 1964, IBM combined magnetic tape (MT) storage with its Selectric line of electric typewriters (ST). Selectric typewriters were widely used: they were relatively inexpensive and could reliably transform the mechanical action of a keyboard into binary electric signals. Consequently, Selectrics became a common input interface in a number of early computing platforms (floor plans).40 The MT/ST could be considered one of the first personal “word processors” in that it combined electromagnetic tape storage with keyboard input. Where typists previously had to stop and erase every mistake, the IBM MT/ST setup allowed them to “backspace, retype, and keep going.” Mistakes could be corrected in place, on magnetic tape, “where all typing is recorded and played back correctly at incredible speed.”41

Despite its advantages, the MT/ST architecture inherited the problem of legibility from its predecessors. Information stored on tape was still invisible to the typist. In addition to being encoded, electric alphabets were written in magnetic domains and polarities, which lay beyond human sense.42 One still had to verify input against stored quantities to ensure their congruence. However, the stored quantity could be checked against input only by transforming it yet again into another inscription. To verify what was stored the operator was forced to redouble the original inscription, in a process that was prone to error, because storage media still could not be accessed directly without specialized instrumentation.43

Another class of solutions to the legibility problem involved making the magnetic mark more apparent. A pair of American inventors described their 1962 magnetic reader as “a device for visual observation of magnetic symbols recorded on a magnetic recording medium in tape or sheet form.” They wrote:

Magnetic recording tape is often criticized because the recorded signals are invisible, and the criticism has been strong enough to deny it certain important markets. For example, this has been a major factor in hampering sales efforts at substituting magnetic recording tape and card equipment for punched tape and card equipment which presently is dominant in automatic digital data-handling systems. Although magnetic recording devices are faster and more troublefree, potential customers have often balked at losing the ability to check recorded information visually. It has been suggested that the information be printed in ink alongside the magnetic signals, but this vitiates major competitive advantages of magnetic recording sheet material, e.g., ease in correction, economy in reuse, simplicity of equipment, compactness of recorded data, etc.44

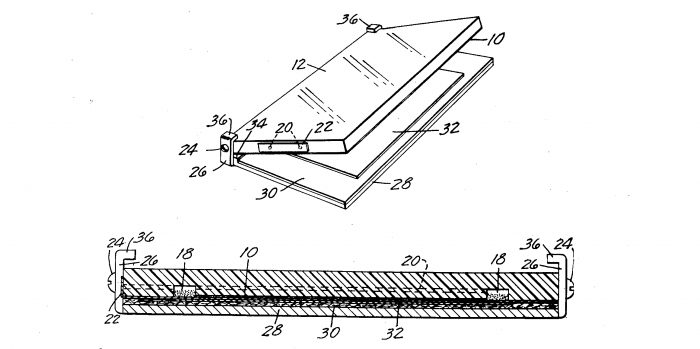

The Magnetic Reader was supposed to redress the loss of visual acuity. The device consisted of two hinged plates, filled with a transparent liquid that would host “visible, weakly ferromagnetic crystals” [Figure 3]. Magnetic tape could then be sandwiched between the plates to reveal the “visible outlines” of the otherwise inaccessible inscription.45

Fig. 3: “Magnetic recording tape is often criticized because the recorded signals are invisible.” Youngquist and Hanes imagined a device that physically reveals the magnetic inscription.46

Devices like the Magnetic Reader attempted to reconcile a fundamental incongruence between paper and tape. Data plowed into rows on the wide plains of a broad sheet had to be replanted in columns along the length of a narrow plastic groove. Some loss of fidelity was inevitable.

To aid in the transformation between media, the next crop of IBM Magnetic Selectric (MS) typewriters added a “composer control unit,” designed to preserve some of the formatting lost in transition between paper and plastic. Tape had no concept of margins or page width. The MS control unit could remember and change page margin size or justify text “in memory.” This was no easy task. The original IBM Composer unit justified text (its chief innovation over the typewriter) by asking the operator to type each line twice: “one rough typing to determine what a line would contain, and a second justified typing.”47 After the first typing, an indicator mechanism calculated the variable spacing needed to achieve proper paragraph justification. The formatting and content of each line thus required separate input passes to achieve the desired result in print. The sign was fractured again for these purposes.
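The two-pass logic of justification can be illustrated with a short Python sketch. It is a simplification under stated assumptions: the Composer worked with proportional type, whereas this example assumes fixed-width character cells, and the function names `rough_pass` and `justified_pass` as well as the rule of giving surplus spaces to the leftmost gaps are hypothetical choices, not details taken from IBM's documentation.

```python
def rough_pass(words: list[str]) -> int:
    """First typing: measure the line with a single space between words."""
    return sum(len(w) for w in words) + max(len(words) - 1, 0)


def justified_pass(words: list[str], measure: int) -> str:
    """Second typing: widen the gaps so the line fills the measure exactly."""
    gaps = len(words) - 1
    rough = rough_pass(words)
    if gaps < 1 or rough > measure:
        # Nothing to distribute, or the line is already too long: leave it ragged.
        return " ".join(words)
    extra = measure - rough
    base, remainder = divmod(extra, gaps)
    line = words[0]
    for i, word in enumerate(words[1:]):
        # Each gap gets one ordinary space plus its share of the surplus;
        # the leftmost gaps absorb whatever does not divide evenly.
        line += " " * (1 + base + (1 if i < remainder else 0)) + word
    return line
```

For instance, `justified_pass(["now", "is", "the", "time"], 20)` pads a fifteen-character rough line out to twenty characters. The content of the line is fixed in the first pass and its spacing is computed only afterward, which mirrors the separation of content from formatting that the paragraph above describes.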

IBM’s next-generation Magnetic Tape Selectric Composer (MT/SC) built on the success of its predecessors. It combined the Selectric keyboard, magnetic tape storage, and a “composer” format control unit. Rather than having the operator type each line twice, the MT/SC system printed the entered text twice: once on the input station printout, which showed both content and control code in red ink, and a second time, into the final “Composer output printout,” which collapsed the layers into the final typeset copy. Output operators still manually intervened to load paper, change font, and include hyphens. The monolithic page unit was thereby further systematically deconstructed into distinct strata of content and formatting.

Like other devices of its time, the IBM MT/SC suffered from the problem of indiscernible storage. Error checking of input using multiple printouts was aided by a control panel consisting of eleven display lights. The machine’s manual suggested that the configuration of lights be used to peek at the underlying data structure for verification.48

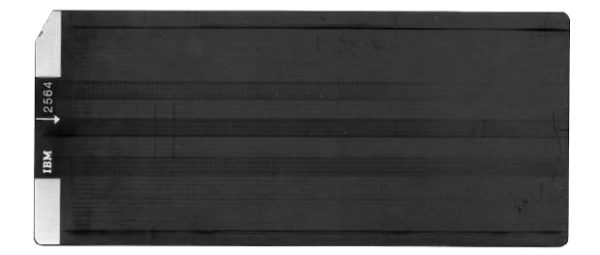

In an attempt to achieve ever greater congruence between visible outputs and data archived on a magnetic medium, IBM briefly explored the idea of using magnetic cards instead of tape. On tape, information had to be arranged serially, into one long column of codes. Relative arrangement of elements could be better preserved, it was thought, on a rectangular magnetic card, which resembled paper in its proportions. The 1968 patent “Data Reading, Recording, and Positioning System” described a method for arranging information on a storage medium “which accurately positions each character recorded relative to each previous character recorded.”49 In 1969, IBM released a magnetic card-based version of its MT/ST line, dubbed the MC/ST (magnetic card, Selectric type). Fredrick May, whose name often appears on word-processing-related patents from this period, would later reflect that a “major reason for the choice of a magnetic card for the recording of medium was the simple relationship that could be maintained between a typed page and a recorded card.” The card approximated a miniature page, making it a suitable “unit of record of storage for a typed page.”50 Although it offered a measure of topographic analogy between tape and paper, the “mag card” was short-lived partly because of its limited storage capacity, capricious feeding mechanism, and persistent inscrutability.51

Fig. 4: IBM Mag Card II, introduced in 1969 for use in the Magnetic Card/Selectric Typewriter (MC/ST). A simple relationship could be “maintained between a typed page and a recorded card.”52

For a few decades after the advent of magnetic storage media but before the arrival of screen technology, the sign’s outward shape disappeared altogether. It is difficult to fathom now, but at that time—after the introduction of magnetic tape in the 1960s but before the widespread advent of CRT displays in the 1980s—typewriter operators and computer programmers manipulated text blindly. Attributes such as indent size and justification had to be specified before ink was committed to paper.

In the 1980s, an engineer thus reflected on the 1964 MT/ST’s novelty: “It could be emphasized for the first time that the typist could type at ‘rough draft’ speed, ‘backspace and strike over’ errors, and not worry about the pressure of mistakes made at the end of the page.” The MT/SC further added a “programmable control unit” to separate inputs from outputs. The “final printing” was accomplished by a protracted process of remediation, involving:

mounting the original tape and the correction tape, if any, on the two-station reader output unit, setting the pitch, leading, impression control and dead key space of the Composer unit to the desired values, and entering set-up instructions on the console control panel (e.g., one-station or two-station tape read, depending on whether a correction tape is present; line count instructions for format control and space to be left for pictures, etc.; special format instructions; and any required control codes known to have been omitted from the input tape). During printing the operator changes type elements when necessary, loads paper as required, and makes and enters hyphenation decisions if justified copy is being printed.53

Tape reels and control units intervened between keyboard and printed page. The “final printing” combined “prepared copy,” “control and reference codes,” and “printer output.”54 Historical documents often mention three distinct human operators for each stage of production: one entering copy, one responsible for control code, and one handling paper output.

Researchers working on these early IBM machines considered the separation of print into distinct strata a major contribution to the long history of writing. One IBM consultant went so far as to place the MT/SC at the culmination of a grand “evolution of composition,” which began with handwriting and continued to wood engraving, movable type, and letterpress. “The IBM Selectric Composer provides a new approach to the printing process in this evolution,” he wrote and concluded by heralding nothing less than the “IBM Composer era,” in which people would once again write books “without the assistance of specialists.”55 Inflationary marketing language aside, the separation of the sign from its immediate material contexts and its new composite constitution must be considered a major milestone in the history of writing.

The move from paper to magnetic storage had tremendous social and political consequences for the republic of letters. Magnetic media reduced the costs of copying and dissemination of the word, freeing it, in a sense, from its more durable material confines. The affordances of magnetic media—its very speed and impermanence—created the illusion of ephemeral lightness. Yet the material properties of magnetic tape itself continued to prevent direct access to the site of inscription. Magnetic media created the conditions for a new kind of illiteracy, which divided those who could read and write at the site of storage from those who could only observe its aftereffects passively, at the shimmering surface of archival projection.

The schematics unearthed in this section embody textual fissure in practice. The path of a signal through the machine has led us to a multiplicity of inscription sites. These are not metaphoric but literal localities that stretch the sign across manifold surfaces. Whereas pens, typewriters, and hole punches transfer inscription to paper directly, electromagnetic devices compound them obliquely into a laminated aggregate. The propagation of electric signal across space necessitated numerous phase transitions between media: from one channel of tape to another, from tape to drum, from a slow drum to a fast one, and from drum and tape to paper. On paper, the inscription remained visible in circulation. It disappeared from view on tape, soon after key press. Submerged beneath an opaque facade of ferric oxide, inscriptions thicken and stratify into laminates.

Third Sediment: Display System

To recapitulate the exposed strata so far: in the first sediment, we saw the convergence of machine and human languages. In the second, a layer of electromagnetic storage supplanted ticker tape and punch card, in a proliferation of writing surfaces. Magnetic storage was “lighter,” faster, more portable and more malleable than print or punch. Ferric oxide became the preferred medium for digital storage. This new memory layer also lay beyond the immediate reach of human senses. It was “weighty” in another sense, difficult to access or manipulate.

Screens added a much-needed window onto an opaque layer of dense deposits. On screen, the topography of electromagnetic storage could be represented visually, obviating the need for double entry or frequent printouts. Screens interjected to mediate between input and output. The stratified complexity of a laminate medium was once again flattened, presenting a single, unified surface: for example, the “print view” of many contemporary word processors. This arrangement of devices took its present form in the late 1960s. Computers eventually became smaller, faster, cheaper, and more ubiquitous. However, they retain the same arrangement of technologies, which, until the advent of “direct” brain-to-computer interfaces, will continue to involve screens suspended above a layer of electromagnetic inscription.

On December 9, 1968, Douglas Engelbart, then the principal investigator at the NASA- and ARPA-funded Augmentation Research Center at the Stanford Research Institute, gave what later became known as the “mother of all demos” to an audience of roughly 1,000 computer professionals attending the Joint Computer Conference in San Francisco. The demo announced the arrival of almost every technology prophesied by Vannevar Bush in his influential 1945 Atlantic essay, “As We May Think.” During his short lecture, Engelbart presented functional prototypes of the following: graphical user interfaces, video conferencing, remote camera monitoring, links and hypertext, version control, text search, image manipulation, windows-based user interfaces, digital slides, networked machines, mouse, stylus, and joystick inputs, and “what you see is what you get” (WYSIWYG) word processing.56

In his report to NASA, Engelbart characterized his colleagues as a group of scientists “developing an experimental laboratory around an interactive, multiconsole computer-display system” and “working to learn the principles by which interactive computer aids can augment the intellectual capability of the subjects.”57 Cathode Ray Tube (CRT) displays were integral to this research mission. In one of the many patents that came out of his “intellect augmentation” laboratory, Engelbart described a “display system,” which included a typewriter, a CRT screen, and a mouse. The schematics show the workstation in action, with the words “now is the time fob” prominently displayed on-screen. The user was evidently in the process of editing a sentence, likely to correct the nonsensical “fob” into “for” [Figure 5].58

Fig. 5: Schematics for Engelbart’s “Display System.” The arrangement of keyboard, mouse, and screen will define an epoch of human-computer interaction.59

Reflecting on the use of visual display systems for human-computer interaction, Engelbart wrote, “One of the potentially most promising means for delivering and receiving information to and from digital computers involves the display of computer outputs as visual representations on a cathode ray tube and the alteration of the display by human operator in order to deliver instructions to the computer.”60

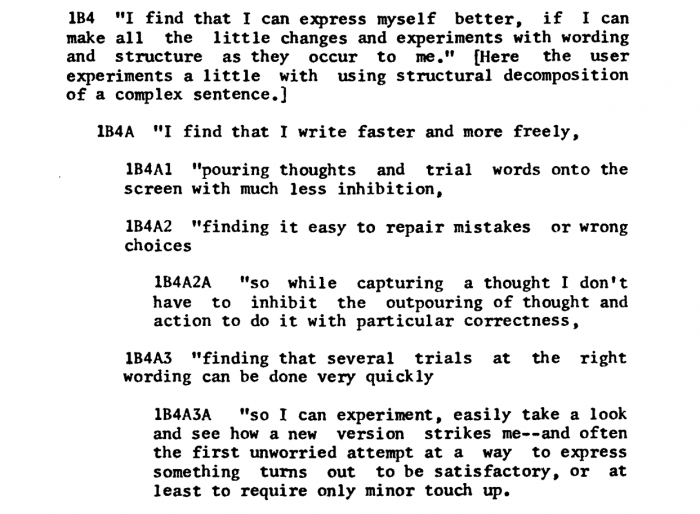

People reading and writing onscreen for the first time reported an intense feeling of freedom and liberation from paper. An anonymous account included in Engelbart’s report offered the following self-assessment:

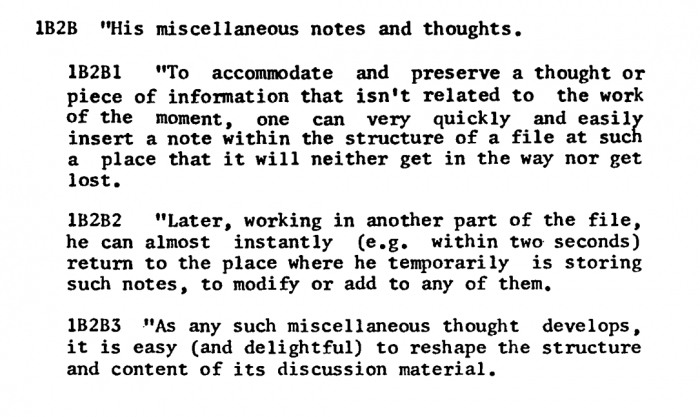

Fig. 6.61

Writing, which this typist previously perceived as an ordered and continuous activity, subsequently was performed in a more free-form and disjointed way. The typist could delight in shaping paragraphs that more closely matched her mental activity. Screens restored some of the fluidity of writing that multiple-entry typewriters denied. Writers could pursue two thoughts at a time, documenting both in different parts of the file as one would in a notebook. Not constrained by the rigidity of a linear mechanism, they moved around the document at will.

Engelbart recorded what must count as some of the most evocative passages to appear in a NASA technical report. His “Results and Discussion” section contains the following contemplation by an anonymous typist. Numbered passages along with unexpected enjambment heighten the staccato rhythm of the prose, which attains an almost lyrical quality:

Fig. 7.62

The lines remind us that an immutable commitment to a medium, which writers take for granted in stone or on paper, is costly. Ink dries; stone tablets are not appropriate for editing drafts. An eraser or a chisel can help. However, the use of these tools requires a strenuous effort, producing waste and damaging the writing surface. Engelbart’s anonymous typist reports a feeling of freedom from such materialistic concerns. She can simply backspace and start over. Screen writing is not permanent. Words come easily because there are no penalties for being wrong. Virtual space seems to her limitless and endlessly pliable. The typist concludes:

Finding that where I might otherwise hesitate in search of the right word, I now pour out a succession of potentially appropriate words, leaving them all there while the rest of the statement takes shape. Then I select from among them, or replace them all, or else merely change the list a bit and wait for a later movement of the spirit.63

That feeling of physical transcendence, the very ephemeral quality of digital text, is tied directly to the underlying affordances of electromagnetic storage. Content can be addressed in memory and copied at a keystroke. The numbered paragraphs suggest a novel system for composition, reading, and recall. The writer describes her data storage units in terms of perceived, mental units that more closely track the flow of her thought and that similarly carry no penalties for changing one’s mind.

I am struck by the distinctly phenomenological quality of the reported findings. The screen does not merely resemble a page – it creates a new way of thinking in the mind of the writer. The highly hierarchical paragraph structure, along with its repetitive refrain – “finding” and “I find that” – gives the prose a hypnotic drive forward. The cadence matches the experience of fluent discovery.

The passages appear too contrived to be spontaneous. The informal prose nevertheless advances many of the key elements of Engelbart’s research program, which aimed to develop new data structures in combination with new ways of displaying them. Engelbart’s research into intellect augmentation created tools that augment research. In an image that evokes Baron Münchhausen pulling himself out of a swamp by his own bootstraps, Engelbart called his group’s methodology “bootstrapping,” which involved the recursive strategy of “developing tools and techniques” to develop better tools and techniques.64 The “tangible product” of such an activity was a “constantly improving augmentation system for use in developing and studying augmentation systems.”65

It was an appealing vision, so long as it remained recursive. Engelbart’s group benefited from creating their own tools and methods. Engelbart also hoped that his system could be “transferred—as a whole or by pieces of concept, principle and technique—to help others develop augmentation systems for many other disciplines and activities.”66 His ideas about intellect augmentation have had a broad impact on knowledge work across disciplines. Outside the narrow confines of an active research laboratory his vision loses the property of self-determination. Contemporary readers rarely make their own tools. Augmentation enforced from without advances values and principles no longer comprehensible to the entity being augmented.

To bring his system into being, Engelbart convened a group that through recursive self-improvement could lift itself up toward a smarter, more efficient, more human way of doing research. The group crafted novel instruments for reading and writing. They engineered new programming languages, compilers to interpret them, and debuggers to troubleshoot them. The system showed care and love for the craft of writing. It also introduced great complexity. “This complexity has grown more than expected,” Engelbart wrote in conclusion of his report to NASA.67 The feeling of transcendence that the anonymous typist described in using the system engaged a sophisticated mechanism. The mechanism was not, however, the primary instrument of intellect augmentation. Rather, enhancement lay in the process of designing, making, and experimenting with writing tools. The process was ultimately more important than its product.

Engelbart wrote, “The development of the Bootstrap Community must be coordinated with the capacity of our consoles, computer service, and file storage to support Community needs, and with our ability to integrate and coordinate people and activities.”68 In other words, the development of the community formed a feedback loop with software development. It involved training, practice, critical self-reflection, and thoughtful deliberation.

Modern word processors allow us to drag and drop passages with unprecedented facility. We live in Engelbart’s world to the extent that we routinely use technology created by his lab. But the vision of an empowered, self-experimenting computer user never quite materialized. Contemporary writers are bootstrapped passively to the prevailing vision of intellect augmentation. Engelbart’s research program thus left another less lofty imprint on the everyday practice of modern intellectual life. Text, which before the advent of CRTs was readily apparent on the page, entered a complex system of executable code and inscrutable control instruction. The material lightness of textual being came at the price of legibility: complexity has grown more than expected.

Short-lived screenless word processors of the 1960s (such as the IBM MT/ST) were difficult to operate, because typists had no means to visualize complex data structures on tape. Screens helped by representing document topography visually, restoring a sense of apparent space to an otherwise featureless medium. Contemporary digital documents resemble pages onscreen. Screens simulate document unity by presenting holistic images of paragraphs, pages, and books.69 The simulation seems to follow the physics of paper and ink: One can turn pages, write in margins, and insert bookmarks. The underlying inscription remains in fracture. Simulated text does not transcend matter. Screens merely conceal their material properties while recreating others, more seemingly transcendent ones. The act of continual dissemblage – one medium imitating the other – manufactures an ephemeral illusion by which pages fade in and out of sight, paper folds in improbable ways, and words glide effortlessly between registers.

In the rift between input and output, programmable media inject arbitrary intervals of time and space. Forces of capital and control occupy the void as the sign acquires new dimensions and capabilities for automation. Code and codex finally sink beneath the matte surface of a synthetic storage medium. Screens purport to restore a sense of lost immediacy, of the kind felt on contact between pen nib and paper as the capillary action of cellulose conveys ink into its shallow conduit. Patently the screen is not a page. A laminate page recreates print, just as it obscures the shape of a dimensional figure. To mistake this flat surface for the inscription in full is to preclude the analysis of mediation itself.

Screens were meant to open a window onto the unfamiliar physicalities of electromagnetic inscription. In that, they obviated the need for multiple typings and printouts. Projected image should, in theory, correspond to its originating keystroke. The gap separating inputs and outputs appears to close. Crucially, the accord between archived inscription and its image cannot be guaranteed. The interval persists in practice and is actively contested. Deep and shallow inscriptions entwine. Laminate text seems weightless and ephemeral at some layers of the composite, allowing for rapid remediation. At other layers its affordances are determined by its physics; at still other strata they are carefully constructed to resist movement or interpretation. Alienated from the base particulates of the word, we lose some of our basic interpretive capacities to interrogate embedded power structures. The history of digital text is one of its gradual disappearance and the rise of a simulation.70

Wendy Hui Kyong Chun, “The Enduring Ephemeral, or the Future Is a Memory,” Critical Inquiry 35, no. 1 (2008): 148. ↩

I extend my gratitude to Susan Zieger and Priti Joshi for their expert editorial guidance. ↩

See Caroline Levine, Forms: Whole, Rhythm, Hierarchy, Network (Princeton, NJ: Princeton University Press, 2015), 6-11. Levine explains: “Affordance is a term used to describe the potential uses or actions latent in materials and designs. Glass affords transparency and brittleness. Steel affords strength, smoothness, hardness and durability. Cotton affords fluffiness, but also breathable cloth when it is spun into yarn and thread” (6). ↩

On the persistence of erased data see Matthew Kirschenbaum, Mechanisms: New Media and the Forensic Imagination (MIT Press, 2008), 50. ↩

See for example Hans-Georg Gadamer, Truth and Method (New York: Seabury Press, 1975), 110: “In both legal and theological hermeneutic there is an essential tension between the fixed text—the law or the gospel—on the one hand and, on the other, the sense arrived at by applying it at the concrete moment of interpretation.” See also Paul Ricoeur, Interpretation Theory: Discourse and the Surplus of Meaning (Fort Worth: Texas Christian University Press, 1976), 28. ↩

Friedrich Kittler, transl. Geoffrey Winthrop-Young and Michael Wutz, Gramophone, Film, Typewriter (Stanford, CA: Stanford University Press, 1999), 263. ↩

See Lori Emerson, Reading Writing Interfaces: From the Digital to the Bookbound (Minneapolis: University of Minnesota Press, 2014); Matthew Fuller, Media Ecologies: Materialist Energies in Art and Technoculture (Cambridge, MA: MIT Press, 2007); Lisa Gitelman, Paper Knowledge: Toward a Media History of Documents (Durham, NC: Duke University Press, 2014); Katherine Hayles, Writing Machines (Cambridge, MA: MIT Press, 2002). ↩

Pamela McCorduck tells the story of a rabbinate court, which, when interpreting a law that prohibits observant Jews from erasing God’s name, deemed that words on a screen do not constitute writing, and therefore sanctioned their erasure. See Pamela McCorduck, The Universal Machine: Confessions of a Technological Optimist (New York: McGraw-Hill, 1985); Michael Heim, “Electric Language: A Philosophical Study of Word Processing” (New Haven: Yale University Press, 1987), 192. ↩

See Mara Mills, “Deafening: Noise and the Engineering of Communication in the Telephone System,” Grey Room (April 1, 2011): 118–43; Hans-Jörg Rheinberger, Toward a History of Epistemic Things: Synthesizing Proteins in the Test Tube (Stanford, CA: Stanford University Press, 1997); Jessica Riskin, “The Defecating Duck, Or, the Ambiguous Origins of Artificial Life,” Critical Inquiry 29, no. 4 (June 1, 2003): 599–633; Nicole Starosielski, The Undersea Network (Durham, NC: Duke University Press Books, 2015). ↩

See, for example, Erkki Huhtamo and Jussi Parikka, Media Archaeology: Approaches, Applications, and Implications (Berkeley, CA: University of California Press, 2011), 3: “Media Archaeology should not be confused with archaeology as a discipline. When media archaeologists claim they are ‘excavating’ media-cultural phenomena, the word should be understood in a specific way.” See also Grant Wythoff, “Artifactual Interpretation,” Journal of Contemporary Archaeology 2, no. 1 (April 24, 2015): 23–29. On the use of stratigraphy related to hard drive forensics see Sara Perry and Colleen Morgan, “Materializing Media Archaeologies: The MAD-P Hard Drive Excavation,” Journal of Contemporary Archaeology 2, no. 1 (April 24, 2015): 94–104. ↩

William Smith, “DELINEATION of the STRATA of ENGLAND and WALES with part of SCOTLAND; exhibiting the COLLIERIES and MINES; the MARSHES and FEN LANDS ORIGINALLY OVERFLOWED BY THE SEA; and the VARIETIES of Soil according to the Variations in the Sub Strata; ILLUSTRATED by the MOST DESCRIPTIVE NAMES,” Section 16 (1815). Image in the public domain. Reproduced from the collection of Sedgwick Museum of Earth Sciences, University of Cambridge. ↩

For periodization of computer systems see Peter Denning, “Third Generation Computer Systems,” ACM Computing Surveys 3, no. 4 (December 1971): 175–216. ↩

Alfred Adler and Harry Albertman, “Knitting Machine,” patent US1927016A filed August 1, 1922, and issued September 19, 1933; Louis Casper, “Remote Control Advertising and Electric Signaling System,” patent US1953072A filed September 9, 1930, and issued April 3, 1934; Clinton Hough, “Wired Radio Program Apparatus,” patent US1805665A filed April 27, 1927, and issued May 19, 1931. ↩

The Australian Donald Murray improved on the Baudot system to minimize the number of holes needing to be punched, allotting fewer perforations to common English letters. See Donald Murray, “Setting Type by Telegraph,” Journal of the Institution of Electrical Engineers 34, no. 172 (May 1905): 567. ↩

Murray, “Setting Type,” 557. ↩

According to the U.S. Bureau of Labor Statistics, women made up 24 percent of the Morse operators in 1915 (before the widespread advent of automated telegraphy). By 1931 women made up 64 percent of printer and Morse manual operators. See U.S. Bureau of Labor Statistics, “Displacement of Morse Operators in Commercial Telegraph Offices,” Monthly Labor Review 34, no. 3 (March 1, 1932): 514. ↩

Hervey Brackbill, “Some Telegraphers’ Terms,” American Speech 4, no. 4 (April 1, 1929): 290. ↩

International Telegraph Union, Telegraph Regulations and Final Protocol (Madrid: International Telegraph Union, 1932), 12-13. ↩

Hyman Eli Goldberg, “Controller,” patent US1165663A filed January 10, 1911, and issued December 28, 1915: sheet 3. ↩

Goldberg, “Controller,” 1. ↩

Goldberg, “Controller,” 1-4. ↩

Goldberg, “Controller,” 1. ↩

Hyman Eli Goldberg, “Controller.” US1165663 A, issued December 1915, sheet 3. ↩

For example, Susan Hockey writes that “Father Busa has stories of truckloads of punched cards being transported from one center to another in Italy.” See Susan Hockey, “The History of Humanities Computing” in Companion to Digital Humanities (Oxford: Blackwell Publishing Professional, 2004). ↩

“Electronic Composition in Printing: Proceedings of a Symposium” by the Center of Computer Sciences and Technology, U.S. National Bureau of Standards, 1967: 48. ↩

See Magnetic Recording: The First 100 Years edited by Eric Daniel, Denis Mee, and Mark Clark (New York: Wiley-IEEE Press, 1998); Friedrich Engel, “1888-1988: A Hundred Years of Magnetic Sound Recording,” Journal of the Audio Engineering Society 36, no. 3 (March 1, 1988): 170–78; Valdemar Poulsen, “Method of Recording and Reproducing Sounds or Signals,” patent US661619A, filed July 8, 1899, and issued November 13, 1900; Oberlin Smith, “Some Possible Forms of the Phonograph,” The Electrical World (September 8, 1888): 116–17; Heinz Thiele, “Magnetic Sound Recording in Europe up to 1945,” Journal of the Audio Engineering Society 36, no. 5 (May 1, 1988): 396–408; Bane Vasic and Erozan Kurtas, Coding and Signal Processing for Magnetic Recording Systems (CRC Press, 2004). ↩

Marvin Camras, “Magnetic Recording Tapes,” Transactions of the American Institute of Electrical Engineers 67, no. 1 (January 1948): 505. ↩

Fankhauser, “Telegraphone,” 40. ↩

Fankhauser, “Telegraphone,” 39-40. ↩

Fankhauser, “Telegraphone,” 44. ↩

Fankhauser, “Telegraphone,” 45. ↩

Fankhauser, “Telegraphone,” 41. ↩

R.H. Dee, “Magnetic Tape for Data Storage: An Enduring Technology,” Proceedings of the IEEE 96, no. 11 (November 2008): 1775. ↩

The staff of the Computation Laboratory of Harvard University wrote: “Two means are available for preparing the functional tapes required for the operation of the interpolators. First, when the tabular values of f(x) have been previously published, they may be copied on the keys of the functional tape preparation unit […] and the tape produced by the punches associated with this unit, under manual control. Second, a suitable control tape may be coded directing the calculator to compute the values of f(x) and record them by means of one of the four output punches, mounted on the right wing of the machine” in Description of a Magnetic Drum Calculator (Cambridge, MA: Harvard University Press, 1952). ↩

Staff, Description of a Relay Calculator, 30. ↩

Alan Turing, “Computing Machinery and Intelligence,” Mind 59, no. 236 (October 1, 1950): 444. ↩

Turing, “Computing Machinery and Intelligence,” 444. ↩

Staff, Annals of the Computation Laboratory of Harvard University: Description of a Relay Calculator Vol. XXIV (London, UK: Oxford University Press, 1949): 34-35. ↩

Staff, Description of a Magnetic Drum Calculator, 35 & 143-88. ↩

Daniel Eisenberg, “History of Word Processing” in Encyclopedia of Library and Information Science (New York: Dekker, 1992), 49:268–78. ↩

American Bar Association, “The $10,000 Typewriter,” ABA Journal (May 1966). ↩

Hiroyuki Ohmori, et al., “Memory Element, Method of Manufacturing the Same, and Memory Device,” United States Patent Application 20150097254, filed September 4, 2014, and issued April 9, 2015; Carmen-Gabriela Stefanita, Magnetism: Basics and Applications (Springer Science & Business Media, 2012): 1-69. ↩

Recall Wittgenstein’s broken reading machines, which exhibited a similarly recursive problem of verification. To check whether someone understood a message, one has to resort to another message, and so on. ↩

Robert Youngquist and Robert Hanes, “Magnetic Reader,” patent US3013206A, filed August 28, 1958, and issued December 12, 1961: 1. ↩

Youngquist and Hanes, “Magnetic Reader,” 1. ↩

Robert Youngquist and Robert Hanes, “Magnetic Reader,” Patent US3013206, 1961. ↩

J.S. Morgan and J.R. Norwood, “The IBM SELECTRIC Composer: Justification Mechanism,” IBM Journal of Research and Development 12, no. 1 (January 1968): 69. ↩

D.A. Bishop, et al., “Development of the IBM Magnetic Tape SELECTRIC Composer.” IBM Journal of Research and Development 12, no. 5 (September 1968): 380–98. ↩

Douglas Clancy, George Hobgood, and Frederick May, “Data Reading, Recording, and Positioning System,” patent US3530448A, filed January 15, 1968, and issued September 22, 1970: 1. ↩

F.T. May, “IBM Word Processing Developments,” IBM Journal of Research and Development 25, no. 5 (September 1981): 743. ↩

May, “IBM Word Processing Developments,” 743. ↩

May, “IBM Word Processing Developments,” 743. ↩

Bishop et al., “Development,” 382. ↩

Bishop et al., “Development,” 382. See also May, “IBM Word Processing Developments.” ↩

A. Frutiger, “The IBM SELECTRIC Composer: The Evolution of Composition Technology,” IBM Journal of Research and Development 12, no. 1 (January 1968): 10. ↩

See Douglas Engelbart, “Doug Engelbart 1968 Demo,” December 9, 1968, maintained by Stanford University’s Mouse site. The demo is the subject of numerous academic studies. See Paul Atkinson, “The Best Laid Plans of Mice and Men: The Computer Mouse in the History of Computing,” Design Issues 23, no. 3 (2007): 46–61; Thierry Bardini, Bootstrapping: Douglas Engelbart, Coevolution and the Origins of Personal Computing (Stanford, CA: Stanford University Press, 2000); Simon Rowberry, “Vladimir Nabokov’s Pale Fire: The Lost ‘Father of All Hypertext Demos’?” in Proceedings of the 22nd ACM Conference on Hypertext and Hypermedia (2011): 319–324; Chun, “The Enduring Ephemeral,” 85. ↩

Douglas Engelbart, “Human Intellect Augmentation Techniques,” NASA Contractor Report (January 1969): 1. ↩

The source of the cryptic phrase is likely Charles Edward Weller: “We were then in the midst of an exciting political campaign, and it was then for the first time that the well-known sentence was inaugurated—‘Now is the time for all good men to come to the aid of the party’; also the opening sentence of the Declaration of Independence, […] which sentences were repeated many times in order to test the speed of the machine.” See Charles Edward Weller, The Early History of the Typewriter (La Porte, IN: Chase & Shepard, 1918): 21, 30. ↩

Douglas Engelbart, “X-Y Position Indicator for a Display System,” Patent US3541541, 1970. ↩

Weller, Early History of the Typewriter, 1. ↩

Engelbart, “Human Intellect Augmentation Techniques,” 50-51. ↩

Engelbart, “Human Intellect Augmentation Techniques,” 50-51. ↩

Engelbart, “Human Intellect Augmentation Techniques,” 50-51. ↩

Douglas Engelbart and William English, “A Research Center for Augmenting Human Intellect” in Proceedings of the December 9-11, 1968, Fall Joint Computer Conference (1968): 396. ↩

Engelbart, “Human Intellect Augmentation Techniques,” 6. ↩

Engelbart, “Human Intellect Augmentation Techniques,” 6. ↩

Engelbart, “Human Intellect Augmentation Techniques,” 67. ↩

Engelbart, “Human Intellect Augmentation Techniques,” 67. ↩

On simulation see the discussion in Lev Manovich, Software Takes Command (New York; London: Bloomsbury Academic, 2013): 55-106 and Brenda Laurel, Computers as Theatre (Reading, MA: Addison-Wesley, 1991). ↩

Portions of this essay appear in their extended form in Chapter 4 of Plain Text: The Poetics of Computation (Palo Alto, CA: Stanford University Press, 2017). ↩

Article: Author does not grant a Creative Commons License as part of this Agreement.