In 1886 German chemist Clemens Winkler discovered a new atomic element. Situated on the periodic table one level directly below silicon and named after the country of his birth, germanium would come to be widely used in the manufacture of the fiber optic cables that are now an essential part of our global information networks.1 Nearly a hundred years later, Friedrich Kittler wrote about these fiber optic networks as part of his larger project to study media by focusing on the material and technical aspects of their operation – a body of work forming the cornerstone of the materialist approach now widely referred to as German media theory. More recently still, Jussi Parikka outlines the potentials of a “more geologically-oriented notion of depth of media,” and an “excavation into the mineral and raw material basis of technological development,” citing germanium as one of several elements that could be studied in a “materiality of information technology [starting] from the soil.”2 We might thus trace this material, germanium, through a history of ideas beginning with new communication technologies enabled by 19th century scientific development and ending with a focus on the underlying material properties that make these technologies possible.

Consider another historical moment which, when introduced here, puts a slight wrinkle in the above tidy trajectory. While germanium itself was an important discovery, its truly remarkable role in the history of science comes from the fact that it had been predicted more than a decade before Winkler by another chemist, Dmitry Mendeleev. In being proven to exist almost precisely as Mendeleev predicted, germanium confirmed his hypothesis of the “periodicity” of atomic elements, and cemented the periodic table as an essential organizing principle and epistemological device.3 Winkler very literally filled in a blank, drawn by Mendeleev, in a Foucauldian tabula, which in this case was the periodic table itself. In other words, even at this very “elemental” level, where we might look to find some material bedrock upon which to construct an archaeological media theory, we find still lower levels: observable phenomena determined by combinations of subatomic particles, and the epistemological tools that we use to predict, discuss, and conceptualize them. After all, isn’t every discovery itself also an invention? It is the invention of a name – the practice of turning a thing into an object that we can talk about and situate among other objects. Every discourse network is itself still a discursive formation.

Perhaps nowhere is this more poignantly illustrated than in the case of computer software. Within the much-discussed methodology of “object-oriented programming,” a programmer literally describes some worldly thing as an object within some system of other objects – giving it a name and attributes, enabling it to be related to other objects and operated on by the computer program.4 This essay focuses on software and software-based network technology in order to examine and expand on what it means to do network archaeology. By introducing a software structure known as the stack, I will demonstrate how software is particularly appropriate for an archaeological analysis, but caution that, in the process, we must be careful about conceptualizing these systems as existing beyond the reach of interpretive cultural analysis. An archaeological analysis of the stack illustrates how such software systems are always intensely striated and highly hierarchical, comprised of layers that provide fertile ground for archaeological digging – but at the same time it problematizes the materialist approach, as it reveals how each layer of a software system is always constructed “above” some lower level.

In an essay titled “Media Archaeography,” Wolfgang Ernst writes that media archaeology is “primarily interested in the nondiscursive infrastructure and (hidden) programs of media.”5 This characterization prompts a more formal articulation of the questions introduced above: is it possible to access and identify aspects of media that can truly be considered “nondiscursive”? And to the extent that such an operation is possible, precisely where and how are we to locate such aspects? Ernst goes on to say that “media are not only objects but also subjects of media archaeology,” referring to a kind of non-human agency seen as embedded within certain technical media, particularly software.6 In the context of the present discussion – whether it is truly possible to access nondiscursive layers of media, and what that even might mean – it will be productive to explore how it is that software-based media can be conceptualized as subjects.

Fig. 1: Flow charts illustrating an IF-THEN and IF-THEN-ELSE construct in the Processing programming language.7

One such conceptualization comes from Kittler in the “Typewriter” chapter of Gramophone, Film, Typewriter, in which he attempts to identify what it is that makes computers subjects by focusing on a software concept known as conditional branching. Conditional branching is the construct within a computer program that allows it to alter its flow of instructions based on the result of some other calculation. Kittler focuses on this quite heavily and locates it as the necessary and sufficient condition of a machine subject. But Kittler actually overemphasizes the importance of conditional branching – what he calls the IF-THEN. He writes:

[with conditional branching] information machines bypass humans, their so-called inventors. Computers themselves become subjects. IF a preprogrammed condition is missing, data processing continues according to the conventions of numbered commands, but IF somewhere an intermediate result fulfills the condition, THEN the program itself determines successive commands, that is, its future … Computers operating on IF-THEN commands are therefore machine subjects.8
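The two constructs shown in Figure 1 can be sketched in a few lines. The following Python fragment is only an illustration, not Kittler's or Processing's own notation: the function names, the command labels, and the threshold of 10 are all hypothetical. It shows a sequence of "numbered commands" whose continuation depends on an intermediate result.

```python
def if_then(intermediate_result):
    """IF-THEN: an extra command runs only when the condition is fulfilled."""
    commands = ["command 1"]
    if intermediate_result > 10:          # hypothetical preprogrammed condition
        commands.append("branch command")
    commands.append("command 2")          # the default sequence continues
    return commands


def if_then_else(intermediate_result):
    """IF-THEN-ELSE: exactly one of two alternative commands runs."""
    commands = ["command 1"]
    if intermediate_result > 10:
        commands.append("branch A")
    else:
        commands.append("branch B")
    commands.append("command 2")
    return commands
```

Calling `if_then(5)` yields only the default sequence, while `if_then(42)` inserts the branch command: the same program text produces a different succession of commands depending on a value computed at run time.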

The IF-THEN was first explored in machine form by Charles Babbage – the 19th century inventor now well known for designing two “engines” that have come to be seen as mechanical precursors to our current digital information technology.9 The first, the Difference Engine, was essentially a complex adding machine designed to exhaustively run the same operation on many inputs, generating large tables that scientists and engineers could use to look up the value of a needed calculation. The machine was never successfully constructed during his lifetime, but after completing plans for it, Babbage began to think more generally about how to create a more powerful machine that could essentially be programmed to solve a wider range of problems. Babbage never managed to complete the design for what came to be called the Analytical Engine, but from what we know, it would have had some facility similar to IF-THEN conditional branching. Babbage’s work seems to lend credence to the idea, as Babbage must have come to believe, that in order to move from a fixed, pre-programmed machine to a generalized, programmable one, something like a conditional IF-THEN would be an invaluable component.

Indeed, Kittler’s argument for the primacy of the IF-THEN is a compelling one. If we conceptualize conditional branching as he argues – as somehow essential to a machine subject – this enables us to understand the IF-THEN in a way that satisfies Ernst: as a kind of naturalized or essential precondition of any computational media, or in other words, a nondiscursive structure that exists outside of or before the technical object. But does this understanding of conditional branching make sense?

In response to this question, consider a somewhat speculative counter-example: the simple mouse trap. Like Babbage’s engines, the basic spring-loaded mouse trap was a product of the 19th century mechanical era – a patent for it was granted several decades after Babbage first conceived of the Analytical Engine.10 In a way, we can see this as the most basic mechanical IF-THEN device. The “programmer” sets up the machine, effectively programming a simple algorithm: if the mouse touches the bait, then close the trap.11 While we would not consider this a true programmable system, the point is that a simple, mechanical circuit between some input and an IF-THEN response does not necessarily create a system that could be thought of as a machine subject.

And so, if the IF-THEN is not the necessary and sufficient condition of a machine subject, is it possible to identify some other structure that is? In the 1930s Alan Turing famously formalized his Universal Turing Machine. Using this notion of conditional branching along with other concepts from mathematics, logic and set theory, Turing created an abstract model of a calculating machine which he formally proved could compute any process that any other such machine could – a theoretical machine that was in fact “universal.”12 The broader claim that this model captures everything that can be effectively computed is known as the Church-Turing Thesis. The other name here refers to Alonzo Church, who had, independently of Turing, devised his own formal model of computation that he called the Lambda Calculus. Alongside this notion of universality, it was also proven that these two fundamentally different models of computation were equivalent – which is to say, they were equally powerful. One key difference though between the two models is that the Lambda Calculus does not have an explicit notion of the IF-THEN conditional branch. Instead, Church’s model is based purely on the ability to define functions (subroutines) and for these functions to be able to call each other in an arbitrarily nested or recursive way. As it turns out, this capacity for a computational process to be composed of definable functions that are able to call each other is sufficient: using only this it is possible to implement conditional branching. It is possible to demonstrate this principle using many functional programming languages – for example LISP, which was designed around principles from the Lambda Calculus.13 So in one sense, we can see a kind of a priori even to the IF-THEN.
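This principle can be sketched without LISP. The following Python fragment is a minimal illustration using the standard "Church encoding" of truth values and numbers; the names `branch` and `describe` are hypothetical, and Python is used here only as a convenient notation for functions that call functions. No `if` statement appears anywhere: a truth value is simply a function that selects one of its two arguments.

```python
# Truth values as functions: each one selects one of two alternatives.
TRUE = lambda then_branch: lambda else_branch: then_branch
FALSE = lambda then_branch: lambda else_branch: else_branch

# Church numerals: the number n is "apply a function n times".
ZERO = lambda f: lambda x: x
SUCC = lambda n: lambda f: lambda x: f(n(f)(x))

# A test built purely from function application: applying the constant
# function "return FALSE" zero times leaves TRUE untouched.
IS_ZERO = lambda n: n(lambda _: FALSE)(TRUE)


def branch(condition, then_thunk, else_thunk):
    # Python evaluates arguments eagerly, so each alternative is wrapped in
    # a zero-argument function; only the selected one is ever called.
    return condition(then_thunk)(else_thunk)()


def describe(n):
    return branch(IS_ZERO(n), lambda: "zero", lambda: "not zero")


ONE = SUCC(ZERO)
TWO = SUCC(ONE)
```

Here `describe(ZERO)` selects the first alternative and `describe(TWO)` the second, so the behaviour of an IF-THEN-ELSE emerges from nothing more than defining and calling functions.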

Media archaeology is often said to be interested in “prehistories,” and in the aforementioned essay, Ernst implores us to understand this not as temporally prior, but rather as technical precondition.14 The Lambda Calculus satisfies each of these aspects: it can be understood as both technically prior and temporally prior, as Church published on the topic slightly before Turing published on his universal machine. However, the present goal is not to argue about which of these models is somehow more essential to computational media. Rather, the purpose is to challenge the primacy of a concept assumed to be inherent to computation itself, revealing that even something as basic as a logical IF-THEN can be seen as constructed within some discursive framework and reducible to some other lower level phenomenon. Additionally, it is productive to explore what can be learned about software and symbolic computation by provisionally considering this other structure as a possible fundamental building block.

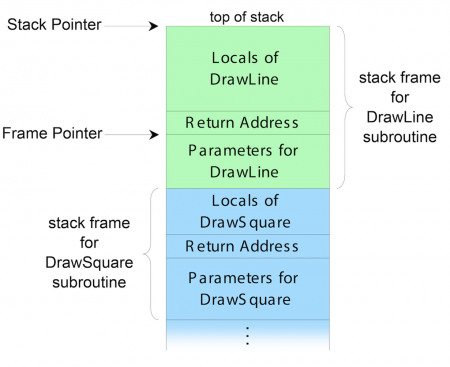

Within actual programming languages (and within the underlying machine hardware on which such programming languages are implemented), the recursively callable functions as described by the Lambda Calculus are implemented using what is known as the function call stack. Using this structure, the computer stores the state of the current function being evaluated, and if that function calls another function, it will “push” everything down and repeat, so that when the latter function returns, it will “pop” off the top and return back to the prior function. Figure 2 illustrates an example of this behavior. Imagine a program that was drawing a square on the screen. The algorithm for this might be to implement a subroutine to draw a line, and then to call that subroutine four times. The diagram shows a snapshot of the stack when the DrawSquare subroutine has just called the DrawLine subroutine. If DrawLine now called something else in turn, everything would be pushed down again. A key concept illustrated here is the way in which computational processes are always composed of multiple frames of reference, or abstraction layers. The stack shows how these are managed within the machine. The direction of the stack’s vertical order is not important (on some systems the stack “grows up,” as here; on other systems it “grows down”). But the stack shows how “higher level” functions (in this case DrawSquare) persist over a duration in which many “lower level” functions may execute and complete.15 An important guiding principle about this type of abstraction within the field of computer science is that higher level functions be designed in a way that is agnostic about the implementation details of lower levels. 
So in the present example, if the DrawLine function were modified in some way (perhaps to draw two shorter lines attached at a slight angle) then the DrawSquare function should still operate without any other modifications (but would then in this case create a kind of octagon shape instead of a simple square).
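The square-drawing example can be sketched as follows. This is a simplified model rather than a view into a real machine's stack: the list `call_stack`, the `traced` helper, and the outer `main` function are assumptions introduced for illustration, and nothing is actually drawn. The sketch records a snapshot of the stack each time the line-drawing subroutine is running.

```python
call_stack = []   # explicit model of the call stack; the last item is the "top"
snapshots = []    # copies of the stack taken while draw_line is executing


def traced(func):
    """Wrap a function so that calling it pushes a frame and returning pops it."""
    def wrapper(*args, **kwargs):
        call_stack.append(func.__name__)   # push
        try:
            return func(*args, **kwargs)
        finally:
            call_stack.pop()               # pop on return
    return wrapper


@traced
def draw_line(side):
    snapshots.append(list(call_stack))     # record the stack at this moment
    return f"line {side}"


@traced
def draw_square():
    # The higher-level function knows only that draw_line exists,
    # not how it is implemented.
    return [draw_line(side) for side in range(4)]


@traced
def main():
    return draw_square()


result = main()
```

Each of the four snapshots reads `['main', 'draw_square', 'draw_line']`: the higher-level frames persist while the lower-level one comes and goes. Replacing the body of `draw_line` would change what is drawn without requiring any change to `draw_square`.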

Fig. 2: A snapshot of a hypothetical function call stack.16

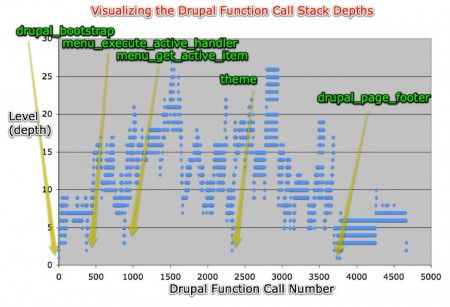

This function call stack is illustrated with a concrete example in Figure 3. The chart pictured here is a visualization of the execution of an actual computer program – in this case a popular open source content management system (a web tool that allows non-technical users to manage complex websites) although the same principles apply to nearly any program.17 The computational task illustrated here is the generation of one webpage within this system. This visualization was generated using Xdebug: a diagnostic tool for the PHP programming language that, among other things, can actually record every function call of a program during some timeframe and export that data to be plotted in a separate charting tool.18 On the lower left of the chart is the first function that gets called. Time is on the horizontal axis and advances to the right, and every vertical uptick is a nested function call. The graph illustrates the way in which the size of the call stack increases and decreases as functions call other functions and then return themselves. This graphic shows that there are approximately 5000 function calls total, and in practice this would probably take a few tenths of a second.19 Visualized in this way, the stack demonstrates the manner in which various abstraction levels operate at vastly different and nearly incompatible time scales: a real world example of the concept of micro-temporalities, discussed elsewhere in this issue. Higher level functions may be responding to user input or creating an animation visible to the human eye, while lower level functions carry out inhuman amounts of computation in minuscule intervals.

Fig. 3: The function call stack illustrated for the generation of one web page within the Drupal content management system.20

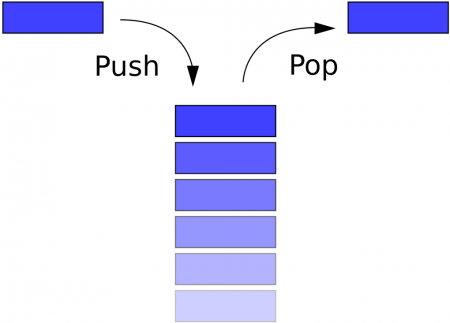

In addition to the function call stack, within the field of computer science, the basic principles of the stack have been generalized into structures that are studied in and of themselves. Perhaps most fundamentally, the stack is studied as a construct known as a data structure: a kind of generic schema or diagram for storing data in a way that is useful in many different contexts and for many different algorithms.21 The main operations on a stack are known as push and pop, and the primary feature of a stack is what is known as “last in, first out”: the most recent item to have been added is the first that gets removed. This set of operations is essential to the archaeological approach. Archaeology proper takes the earth as its stack – newer objects are closer to the surface, and the archaeologist digs deeper to access and excavate prior historical periods – and in media archaeology we begin with our present techno-media circumstance and look for forgotten precedents, alternative histories, etc.
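The push and pop operations can be sketched as a small data structure. The class below is a minimal illustration, assuming a Python list as the underlying storage; the `Stack` class, its `peek` method, and the example "strata" are hypothetical names chosen to echo the archaeological analogy.

```python
class Stack:
    """A last-in, first-out container with the two classic operations."""

    def __init__(self):
        self._items = []

    def push(self, item):
        """Place an item on top of the stack."""
        self._items.append(item)

    def pop(self):
        """Remove and return the most recently pushed item."""
        if not self._items:
            raise IndexError("pop from an empty stack")
        return self._items.pop()

    def peek(self):
        """Return the top item without removing it."""
        if not self._items:
            raise IndexError("peek at an empty stack")
        return self._items[-1]

    def __len__(self):
        return len(self._items)


# Deposit layers from oldest to newest, as sediment accumulates.
strata = Stack()
for layer in ["oldest layer", "middle layer", "newest layer"]:
    strata.push(layer)

# Excavation proceeds in the opposite order: newest first.
excavated = [strata.pop() for _ in range(len(strata))]
```

After this runs, `excavated` lists the layers from newest to oldest, which is the "last in, first out" behaviour described above.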

Fig. 4: The operations of the stack.22

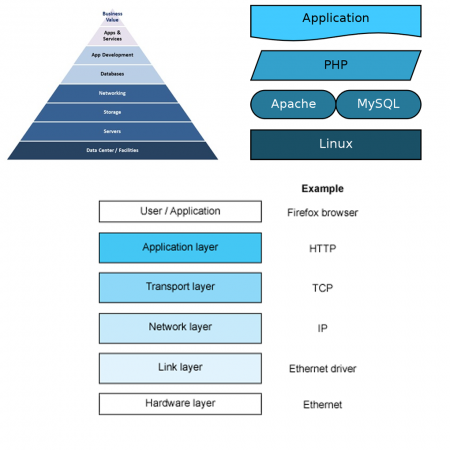

Even beyond these purely technical examples, the stack pops up in many other contexts. Stack-like structures are used to conceptualize technological systems in numerous ways. Figure 5 shows several of these. The first is a general diagram revealing the architecture of some software application – often called an “application stack.” This type of illustration is widely used to explain the pieces of a complex software system to a non-technical audience. While this type of stack is technically different from the function call stack introduced above, both diagrams embody a similar approach to representing the recursively nested abstraction layers of technical systems. At the top of the diagram are always terms like “value,” “business,” “users,” etc.; and at the bottom are terms standing in for “low level” infrastructure such as “hardware,” “electricity,” and so on. It is in this sense that the stack or stack-like concepts are perhaps most commonly referenced in media theory; for example, in Expressive Processing, Noah Wardrip-Fruin refers to “the common metaphoric presentation of computing as a tower of abstractions – computing platform, software, and display/interface” which, essentially, is another way of describing the application stack as represented here.23 The next diagram is one particular application stack that is widely used today, composed entirely of open source technologies and known as the LAMP stack. At the bottom we see Linux, an operating system; then Apache and MySQL, a web server and a database; next is PHP, the programming language; and finally, the application itself, seen to sit “on top” of all these other systems.
The last diagram is an example of the network stack, often referred to as the “OSI model.”24 This stack is ubiquitous within discussions of information technology networks, and is mentioned by Alexander Galloway in Protocol: How Control Exists after Decentralization, where, appropriating a concept from Marshall McLuhan, he writes that “the content of every new protocol is always another protocol.”25 The stack provides a new perspective on this concept of protocol nesting, and we can see the stack itself as a generalization of this concept from the network context.
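Protocol nesting of this kind can be sketched in a few lines. The following is a deliberately simplified illustration, not an implementation of the OSI model: the three layer names, the dictionary representation, and the functions `encapsulate` and `decapsulate` are all assumptions made for the example. Each lower layer treats the entire unit handed down from the layer above as its own content.

```python
# Hypothetical, simplified layers, ordered from just below the
# application down toward the physical link.
LAYERS = ["transport", "network", "link"]


def encapsulate(payload, layers=LAYERS):
    """Wrap a payload in one envelope per layer, going down the stack."""
    unit = payload
    for layer in layers:
        unit = {"layer": layer, "content": unit}
    return unit


def decapsulate(unit):
    """Unwrap envelopes from the outside in, returning the order seen."""
    order = []
    while isinstance(unit, dict):
        order.append(unit["layer"])
        unit = unit["content"]
    return order, unit


frame = encapsulate("GET /index.html")
order, message = decapsulate(frame)
```

Unwrapping `frame` visits the layers in the order `link`, `network`, `transport` before reaching the original message: the envelope added last is removed first, and the content of each protocol unit is another protocol unit.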

Fig. 5: (a) A general application stack, (b) the LAMP stack, comprised of Linux, Apache, MySQL and PHP, (c) a network protocol stack.26, 27, 28

Here, I admit that I am conceptually conflating the stack as an operative structure that exists materially within the program code of software systems, and the stack as a class of diagrams used to explain both these operative structures and software systems more generally. By purposefully blurring the distinction between them, I aim to speak about both of these aspects simultaneously and generate new conceptual insights from their mutual interpenetration. On one hand, the operative stack always exists in time, within a functioning machine, implying that the diagrammatic stack then is always a kind of snapshot of this. These diagrams are in turn used to create knowledge about software systems. Conversely, the diagrammatic stack is a spatialization of computational processes that allows us to “see” the way that these processes are implemented in a material system, at scales otherwise inaccessible to us.

Bringing these two aspects together – the operative and the diagrammatic – is an approach given precedent by many theorists, perhaps most notably Bruno Latour in an essay titled “Visualisation and Cognition: Drawing Things Together.” In it, Latour seeks to construct a methodology that could be used “to define what is specific to our modern scientific culture … to find the most economical explanation of its origins and special characteristics.”29 The approach that Latour develops in response to this goal is focused on the importance of “inscription devices”: the term he gives for various methods of visualizing concepts within a field of knowledge, or what he describes in other terms as “the [ways] in which groups of people argue with one another using paper, signs, prints and diagrams.”30 Incidentally, as an example of this, Latour even cites Dmitry Mendeleev and the periodic table mentioned above, claiming: “Chemistry becomes powerful only when a visual vocabulary is invented that replaces the manipulations [of substances] by calculation of formulas,” only when “all the substances can be written in a homogeneous language where everything is simultaneously presented to the eye.”31 However, Latour is careful not to rely on inscription devices alone as the sole causal mechanism in his investigation (“an exclusive interest in visualization and writing falls short, and can even be counterproductive”) and it is this aspect of his argument that is the most powerful.32 He elaborates: “writing and imaging cannot by themselves explain the changes in our scientific societies … Rather, we should concentrate on those aspects that help in the mustering, the presentation, the increase, the effective alignment or ensuring the fidelity of new allies.”33 In other words, in addition to the importance of diagrams and visualization, it is essential to also consider the entire networks of mechanisms that enable these visualizations to have influence on the world. 
“No innovation in the way longitude and latitudes are calculated, clocks are built, log books are compiled, copperplates are printed, would make any difference whatsoever” if not for “commercial interests, capitalist spirit, imperialism, thirst for knowledge,” etc.34 Studying the diagrammatic stack without considering its technical counterpart misses the authority lent to the visualization by the strong connection to its material basis, and ignores the technical insights that the diagram provides. Similarly, only studying the operative aspects of the stack in computational machinery ignores the way that this has co-evolved with a mode of visualization and knowledge creation so closely tied to the recursively nested abstraction layers within the machine. The stack then – in both senses – demonstrates the mechanism through which processes and data are captured and enclosed, made into the operable fodder of computational media.

This conceptual conflation can even be considered stack-like itself: a layering of distinct aspects that allows the result to be studied as one cohesive phenomenon, while nonetheless holding in one’s mind the discrete layers at different scales which can be studied individually. In the introduction to The Archaeology of Knowledge, Foucault describes the archaeological approach to history, writing that “the problem now is to constitute series: to define the elements proper to each series … hence the ever-increasing number of strata, and the need to distinguish them, the specificity of their time and chronologies; hence the need to distinguish not only important events … but types of events at quite different levels (some very brief, others of average duration …); hence the possibility of revealing series with widely spaced intervals formed by rare or repetitive events.”35 In the context of computational media, with its vastly disparate and often seemingly inhuman scales of time and space, identifying these events and arranging them in series is certainly challenging. It seems clear at present that software should be considered a political and historical actor – but this only raises a difficult question: What is a software event? (At what time scale should it be considered? Where should it be said to occur? And who or what exactly should be considered the subject enacting this event?) This question will simply be posed here and left open for future work, but an archaeology of the stack provides a kind of methodological vector pointing to how one might approach such an investigation. In this case we can see the stack functioning as a kind of “bridge” between these vastly different scales of the human and the machine.
As Latour writes, this diagram-oriented method of analysis is particularly powerful when “the phenomena we are asked to believe are invisible to the naked eye; quasars, chromosomes, brain peptides, leptons, gross national products, classes, coast lines [or, I would add, computational processes] never seen but through the ‘clothed’ eye of inscription devices.”36

Is it possible and productive to extend and apply the stack beyond just the context of computational media? As a model of such an application, consider the notion of the apparatus as developed by Michel Foucault, a concept he also referred to as the dispositif.37 While Foucault never provided a specific definition of the dispositif, he did offer a thorough description in a 1977 interview which, as quoted by Giorgio Agamben in his essay “What is an Apparatus?” identifies three main features of the concept:

What I’m trying to single out with this term is, first and foremost, a thoroughly heterogeneous set consisting of discourses, institutions, architectural forms, regulatory decisions, laws, administrative measures, scientific statements, philosophical, moral, and philanthropic propositions … [Second] the apparatus is thus always inscribed into a play of power, but it is also always linked to certain limits of knowledge that arise from it and, to an equal degree, condition it … [And finally] the apparatus is precisely this: a set of strategies of the relations of forces supporting, and supported by, certain types of knowledge.38

In order to show how the stack might function as dispositif, I will establish such a heterogeneous set of contexts.

Thus far, I have explored the stack as a way that function calls are implemented in most programming languages; a push-pop data structure frequently deployed within software algorithms; the means by which protocols are implemented in networked systems; and a diagram of nested layers of abstraction that shapes the formation of knowledge about these systems. Beginning to extend this beyond just these technological contexts, consider a passage by Galloway from his pamphlet series “French Theory Today”:

What is the infrastructure of today’s mode of production? It includes all the classical categories, such as fixed and variable capital. But there is something that makes today’s mode of production distinct from all the others: the prevalence of software. The economy today is not only driven by software [but] in many cases this economy is software, in that it consists of the extraction of value based on the encoding and processing of mathematical information.39

If the economy is software, then certainly considering various aspects of how software functions is an excellent way to provide new insights about the function of the economy. Extrapolating aspects from the stack in this domain, we could think for example of the highly abstracted and stratified modes of production and labor in our globalized economy, and the ways that these are oriented along different scales. We might then be able to see how the places and moments where these strata come into contact with each other are controlled interfaces designed to allow flows in highly specified ways. As another example, we can take urban communication infrastructure, as discussed by Shannon Mattern elsewhere in this issue. In this domain we can consider the notion, referenced there, of path dependency, drawing compelling connections between this and the nested levels of temporal and technical precedent of the stack. Doing so reveals similarities between these two concepts and opens up the potential for new insights about the historical and technological processes of development of urban media.

The work of Benjamin Bratton, notably his forthcoming book titled The Stack: On Software and Sovereignty, provides another opportunity to construct this heterogeneous set of contexts for the stack as dispositif. In a talk from November 2011, Bratton outlines the key arguments from that monograph, opening with the question: “In an age of planetary-scale computation, what is the future of sovereign geography?” Bratton describes his stack as a

vast software/hardware formation, a proto-megastructure of both bits and atoms, literally circumscribing the planet, which … not only perforates and distorts Westphalian models of State territory [but] also produces new spaces in its own image: clouds, networks, zones, social graphs, ecologies, megacities, formal and informal violences, weird theologies, each superimposed one on the other.40

And while he does claim that it is “important to understand the Stack as an abstract model and as a real technical machine,” it is unclear to what extent the layers cited are organized in the same way as the software stack above, strictly speaking, or whether the agglomeration of components that he identifies might be better served by some other diagrammatic structure:

social, human and “analog” layers (chthonic energy sources, gestures, affects, user-actants, interfaces, cities and streets, rooms and buildings, organic and inorganic envelopes) and informational, non-human computational and “digital” layers (multiplexed fiber optic cables, datacenters, databases, data standards and protocols, urban-scale networks, embedded systems, universal addressing tables).41

Regardless, this work opens up the potential for the application of the stack to questions of geopolitics and global governance as they have been influenced by media such as “cloud computing, ubiquitous computing and augmented reality/active interfaces.”42

Considering the stack as dispositif might provide insights into how this structure influences power and knowledge in an age dominated by software and computational media. The lines between various layers of the stack are often referred to as interfaces. Take for example the top level of an application stack with the user “above”: the top line in this case would be the user interface. Within lower levels, the separation between nested abstraction layers is often called an API, for “application programming interface.” An API is a set of commands that a programmer is able to use when implementing a given system (“application”) on top of some lower level – it is the group of facilities provided by a lower level system to a higher level one. What this reveals then is a kind of paradoxical arrangement: so-called higher level systems create a certain indeterminacy in software, opening up possibilities for new types of free creative action; but each of these high-level layers is always already constrained and preconditioned by the lower level systems and infrastructures upon which it is implemented. And so, if it is true that “media determine our situation,”43 then the stack serves as a diagrammatic that illustrates how this is so. If media determine our situation, then still lower level media infrastructures and technological circumstances always determine those media in turn.

Returning then finally to the original question – to what extent it is possible and productive to identify and analyze “nondiscursive” or “subsemantic” layers of culture – considering this in light of the stack model provides some new insights. The stack unfolds a given software system into multiple nested layers, and as such, provides fertile ground for where to look for these nondiscursive aspects. For a given software application or medium, we may want to look to lower levels for the nondiscursive structures that determine them: data processing algorithms, physical hardware, or even the way data is represented on a physical machine as binary 1’s and 0’s. However, in light of the stack, it becomes clear how each layer is simultaneously both discursive and nondiscursive. In a way, each layer of the stack serves a nondiscursive role to those above, and yet, a discursive role in relation to those below.

As a contrived example, consider the digital image. An analysis that attempts to study the digital image by focusing on underlying technical layers might initially encounter the raw pixel color data that comprise each image and consider the pixel as a kind of nondiscursive atomic unit from which to draw conclusions about the capabilities of the digital image. However, looking down further still, one might encounter the raw binary ones and zeros that comprise each pixel’s data – and from this perspective, that pixel itself, with its reds, greens and blues, would be considered the contained meaning. So while the pixel can be conceptualized as the technical basis for the meaning contained in the image, from a still lower level, the pixel is the meaning contained within the lower level system. Of course, this should not imply that it is not productive to study the image by way of the pixel! The technical affordances of the pixel can reveal much about the way the digital image functions. But a stack-based approach should foreground the pixel’s historical contingency over some sense of technical inevitability, and should inform a praxis that recognizes the possibility of refiguring the pixel in radical new ways.
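A short sketch may help make this double status concrete. It assumes one common convention (8 bits per channel, in red, green, blue order). The function names are hypothetical, and the alternative "gray level" reading is invented purely for contrast. The same 24 binary digits are a pixel under one convention and something else entirely under another.

```python
# Illustrative only: assumes 8 bits per channel in RGB order.
# Other image formats pack pixels differently, which is part of the point.

def pixel_to_bits(red: int, green: int, blue: int) -> str:
    """Return the 24-digit binary string for one RGB pixel."""
    for channel in (red, green, blue):
        if not 0 <= channel <= 255:
            raise ValueError("each channel must be in the range 0-255")
    return "".join(format(channel, "08b") for channel in (red, green, blue))


def bits_to_pixel(bits: str) -> tuple[int, int, int]:
    """Read 24 binary digits back as (red, green, blue)."""
    if len(bits) != 24 or set(bits) - {"0", "1"}:
        raise ValueError("expected exactly 24 binary digits")
    return tuple(int(bits[i:i + 8], 2) for i in (0, 8, 16))


def bits_to_gray_levels(bits: str) -> tuple[int, ...]:
    """Read the very same digits under a hypothetical convention: six 4-bit gray levels."""
    return tuple(int(bits[i:i + 4], 2) for i in range(0, 24, 4))


orange = pixel_to_bits(255, 128, 0)
print(orange)                       # 111111111000000000000000
print(bits_to_pixel(orange))        # (255, 128, 0)
print(bits_to_gray_levels(orange))  # (15, 15, 8, 0, 0, 0)
```

Seen from the level of the bits, "orange" is only one possible reading. The pixel is a convention layered on top of them rather than a bedrock.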

There is one problem with this argument as it has been articulated thus far. The line of reasoning presented here could be understood as implying that the stack is somehow necessarily inherent to software or computational media. The fallacy here is that this would then position the stack itself as a kind of nondiscursive structure that all software systems must necessarily employ. Of course, this is not true. The stack too is itself a discursive object – created within the discipline of computer science, with its own history of development. While many early pioneers in the development of computing machinery employed stack-like structures, perhaps the first formal articulation of the stack as a subject of study comes from German computer scientist Friedrich Bauer. Describing his 1951 discovery, he writes: “it amounts to stacking the intermediate results in reverse order of their later use; that is, the result pushed in last is taken out first.”44 Working with colleague Klaus Samelson, Bauer originally named this construct the “cellar.” He recounts the process of discovery:

When I explained the wiring scheme to Samelson, I drew a sketch on paper. The repetitions of the basic pattern forced me to fill the page to the very bottom, and still I was not finished. This caused Samelson to tease me: “Where will you put the remaining wires now?” and I answered “into the cellar.” This is how the name cellar for our stack jokingly came into existence.45

Bauer goes on to state that in articulating the stack structure, he had been strongly influenced by the Swiss computer scientist Heinz Rutishauer. Bauer cites a paper in which Rutishauer explains a computational process for evaluating arbitrary algebraic expressions – numbers, arithmetic operations and parentheses. Rutishauer describes this process as generating a “Klammergebirge,” which roughly translates to “parenthesis mountain range” – and it is precisely this “mountain range” that we still see today, for example as illustrated in figure 3 above.46 Seeing the stack as a historically contingent object serves to remind us that it was created within a discursive framework, and allows us to imagine alternatives, and to consider the different models of computation that those might enable. The stack is a fundamental structure of computational media, but it is also an arbitrary structure and a cultural object. Recognizing this, we might imagine other fundamental models of computational media.
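The principle Bauer describes, and the rising and falling profile Rutishauer names, can both be seen in a few lines of code. The sketch below is not a reconstruction of either man's method. It uses a well-known textbook scheme with two stacks, restricted to fully parenthesized expressions over integers, and all function names are hypothetical. The `mountain_range` function is likewise a simplification that records only how deeply the parentheses are nested at each step.

```python
import operator

OPERATIONS = {"+": operator.add, "-": operator.sub, "*": operator.mul}


def tokenize(expression: str) -> list[str]:
    """Split an expression into integers, operators and parentheses."""
    tokens, number = [], ""
    for char in expression:
        if char.isdigit():
            number += char
            continue
        if number:
            tokens.append(number)
            number = ""
        if char in OPERATIONS or char in "()":
            tokens.append(char)
        elif not char.isspace():
            raise ValueError(f"unexpected character: {char!r}")
    if number:
        tokens.append(number)
    return tokens


def evaluate(expression: str) -> int:
    """Evaluate a fully parenthesized expression such as "((1 + 2) * (7 - 3))"."""
    values, pending = [], []  # two stacks: intermediate results, waiting operators
    for token in tokenize(expression):
        if token == "(":
            continue  # opening parentheses need no action in this scheme
        if token in OPERATIONS:
            pending.append(token)
        elif token == ")":
            right = values.pop()  # the result pushed in last is taken out first
            left = values.pop()
            values.append(OPERATIONS[pending.pop()](left, right))
        else:
            values.append(int(token))
    if len(values) != 1 or pending:
        raise ValueError("expression is not fully parenthesized")
    return values[0]


def mountain_range(expression: str) -> list[int]:
    """Nesting depth after each parenthesis: it rises on "(" and falls on ")"."""
    depth, profile = 0, []
    for token in tokenize(expression):
        if token == "(":
            depth += 1
            profile.append(depth)
        elif token == ")":
            depth -= 1
            profile.append(depth)
    return profile


expression = "((1 + 2) * (7 - 3))"
print(evaluate(expression))        # 12
print(mountain_range(expression))  # [1, 2, 1, 2, 1, 0]
```

Read as heights, the second output traces two small peaks on a shared plateau, a miniature version of the profile the term “Klammergebirge” evokes.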

A second concern with this method of analysis is that a stack-based approach could be seen as possessing a bias toward a teleological perspective: as if to imply that the media technology systems of our present circumstance are the natural or inevitable result of some purposeful procession of technological progress. There are many critiques of such a way of thinking, perhaps most notably from Siegfried Zielinski, who in the aforementioned text advocates for his “anarchaeological” approach to media archaeology. He writes that “the notion of continuous progress from lower to higher, from simple to complex, must be abandoned, together with all the images, metaphors, and iconography that have been – and still are – used to describe progress.”47 While it is true that the stack can serve to illustrate processes of development from low to high, it is important to keep in mind that what it shows is always one particular configuration, not a historically necessary pathway – in effect, a kind of arbitrary teleology, if such a term makes sense. In fact, by unfolding the various nested layers of abstraction within a technical system, an archaeology of the stack can readily point to insights critical of technological progress.

For example, in the 1990s, Sun Microsystems introduced Java, advertised to technologists as a “write once, run anywhere” programming language.48 The promise was that Sun had developed a technology that would free programmers from concerns about idiosyncratic differences between various hardware systems – instead of rewriting code to account for subtle differences between the APIs of different low level systems, a programmer could write a single standardized Java program that would run on all hardware systems. The way this worked in practice was that Sun developed a “virtual machine” and implemented versions of this above the actual physical machine for various hardware platforms. This functioned as a new layer of the stack upon which programmers were to create applications. An analysis of the stack in this example highlights how its technological development was a typical market-driven process of standardization: an attempt to eliminate eccentricity and variance in support of a commercial business interest.

Despite the potential conceptual pitfalls, a stack-oriented approach to the study of software remains a valuable one, if for no other reason than that stack structures exist empirically within present software systems at a fundamental level – they are a ubiquitous data structure and a mode by which recursive function calls are implemented. Beyond the purely empirical level, it is productive to identify and consider the various ways that the stack is expressed through higher level systems, affecting how software-based systems and other dependent media are organized and conceptualized, as well as other related domains such as infrastructure and economics. In one sense, the argument outlined above could be understood as positioning a stack-based analysis in opposition to the materialist approach of German media theory. By inducing a mode of thinking always readily able to see another lower level, the stack might imply that the “nondiscursive” or “subsemantic” layers that the materialist impulse seeks simply do not truly exist. However, my analysis here reveals how the stack is actually complementary and beneficial to the materialist approach. By highlighting the interfaces between layers of media systems and the existence of lower level technological dependencies, the stack provides a methodology that instructs where to search for such non-human technical substrates, while also challenging materialist approaches to always consider such layers as operating in relation to the human, cultural sphere. Indeed, by drawing out a stratigraphy of abstraction – by unfolding the various strata of nested abstraction layers that comprise software and other media systems – and by pointing us toward lower level systems and “hidden programs of media,” while simultaneously challenging the impulse to naturalize or essentialize these lower levels, the stack is an invaluable tool of network archaeology.

John Emsley, Nature’s Building Blocks: An A-Z Guide to the Elements (Oxford: Oxford University Press, 2011), 162. ↩

Jussi Parikka, “A Call for An Alternative Deep Time of the Media,” Machinology, September 28, 2012, accessed December 17, 2012, http://jussiparikka.net/2012/09/28/a-call-for-an-alternative-deep-time-of-the-media/. ↩

Emsley, Nature’s Building Blocks, 162. ↩

Casey Alt, “Objects of Our Affection: How Object Orientation Made Computers a Medium,” in Media Archaeology: Approaches, Applications, and Implications, ed. Erkki Huhtamo and Jussi Parikka (Berkeley: University of California Press, 2011), 278-300. ↩

Wolfgang Ernst, “Media Archaeography,” in Media Archaeology: Approaches, Applications, and Implications, ed. Erkki Huhtamo and Jussi Parikka (Berkeley: University of California Press, 2011), 242. ↩

Ernst, “Media Archaeography,” 241. ↩

Casey Reas and Ben Fry, Getting Started with Processing (Sebastopol: O’Reilly, 2010), 64. ↩

Friedrich A. Kittler, Gramophone, Film, Typewriter (Stanford: Stanford University Press, 1999), 258-259. ↩

“The Babbage Engine,” Computer History Museum, accessed December 30, 2012, http://www.computerhistory.org/babbage/. ↩

Stephen van Dulken, Inventing the 19th Century: 100 Inventions That Shaped the Victorian Age from Aspirin to the Zeppelin (New York: New York University Press, 2001), 128. ↩

In a way, we can see the mouse trap as a homology (to borrow a concept from Deleuze and Guattari) to the neuro-motor circuit of the mouse. Perhaps we could actually see every trap as a homology with the animal it targets. This provides an interesting perspective then for understanding artificial intelligence systems: always trying to capture and enclose some aspect of what we understand to be an essential aspect of some subject. ↩

Martin Davis, Engines of Logic: Mathematicians & the Origin of the Computer (New York: Norton, 2001), 165-166. ↩

Implementing conditional branching in LISP without using an explicit IF-THEN programming language structure was originally explained and demonstrated to me by Brian Harvey, Senior Lecturer Emeritus of Computer Science at UC Berkeley, in conversation on November 19, 2011. ↩

Ernst, “Media Archaeography,” 246. ↩

The usage of “duration” here is a very loose but intentional reference to the term as introduced by Henri Bergson in Matter and Memory. Future work in this area could explore possible additional connections between concepts discussed here and that work. For example, is it productive to consider Bergson’s “cone of memory,” organized from present action to pure memory, as a kind of stack-like structure, organized from low level to high? ↩

“Call stack,” Wikipedia, accessed December 30, 2012, http://en.wikipedia.org/wiki/Call_stack. ↩

The particular CMS shown here is Drupal, which is implemented using the PHP programming language. Other similar systems are WordPress or Joomla. ↩

More information about the Xdebug tool is available on the project homepage, available at http://xdebug.org/. ↩

Benjamin Melancon et al., The Definitive Guide to Drupal 7 (New York: Apress, 2011), 918. ↩

“Visualizing Drupal Function Call Stack Depth,” Flickr, accessed December 30, 2012, http://www.flickr.com/photos/kentbye/1108244604. ↩

Sartaj Sahni and Dinesh P. Mehta, Handbook of Data Structures and Applications (Boca Raton: Chapman & Hall/CRC, 2005), 2-12 – 2-14. ↩

“Stack (abstract data type),” Wikipedia, accessed December 30, 2012, http://en.wikipedia.org/wiki/Stack_(abstract_data_type). ↩

Noah Wardrip-Fruin, Expressive Processing: Digital Fictions, Computer Games, and Software Studies (Cambridge: MIT Press, 2009), 167. ↩

“RFC 3439: Some Internet Architectural Guidelines and Philosophy,” The Internet Engineering Task Force, accessed August 7, 2013, http://www.ietf.org/rfc/rfc3439.txt. ↩

Alexander R. Galloway, Protocol: How Control Exists After Decentralization (Cambridge: MIT Press, 2004), 10-11. ↩

“Aren’t Virtualization and Cloud the Same Thing?” InterConnections, accessed December 30, 2012, http://blog.equinix.com/2011/11/aren%E2%80%99t-virtualization-and-cloud-the-same-thing/. ↩

“The LAMP Stack,” PHP.net, accessed December 30, 2012, http://talks.php.net/show/lamp-ikt-grenland/1. ↩

“Anatomy of the Linux networking stack,” IBM Corporation, accessed December 30, 2012, http://www.ibm.com/developerworks/linux/library/l-linux-networking-stack/. ↩

Bruno Latour, “Visualization and Cognition: Drawing Things Together,” in Knowledge and Society: Studies in the Sociology of Culture Past and Present, ed. Henrika Kuklick and Elizabeth Long (Greenwich: JAI Press), 1. ↩

Latour, “Visualization and Cognition,” 3. ↩

Latour, “Visualization and Cognition,” 13. ↩

Latour, “Visualization and Cognition,” 6. ↩

Latour, “Visualization and Cognition,” 5. ↩

Latour, “Visualization and Cognition,” 6. ↩

Michel Foucault, The Archaeology of Knowledge; And The Discourse on Language (New York: Pantheon Books, 1972), 7-8. ↩

Latour, “Visualization and Cognition,” 17. ↩

Others have written about possible connections between methodologies of Latour and the dispositif, however in this case a more direct connection will be explored. Simon Ganahl, “Ist Foucaults ‘dispositif’ ein Akteur-Netzwerk?” Foucaultblog, April 1, 2013, accessed August 7, 2013, http://www.fsw.uzh.ch/foucaultblog/blog/9/ist-foucaults-dispositif-ein-akteur-netzwerk. ↩

Michel Foucault, Power/Knowledge: Selected Interviews and Other Writings, 1972-1977 (New York: Pantheon Books, 1980), 194. ↩

Alexander R. Galloway, “Quentin Meillassoux, or The Great Outdoors,” in French Theory Today – An Introduction to Possible Futures (Brooklyn: Public School New York/Erudio Editions, 2011), 10. ↩

Benjamin Bratton, “On the Nomos of the Cloud: The Stack, Deep Address, Integral Geography” (paper presented at Berlage Institute, Rotterdam, November 2011). Available online, accessed August 7, 2013, http://www.bratton.info/projects/talks/on-the-nomos-of-the-cloud-the-stack-deep-address-integral-geography/. ↩

Bratton, “On the Nomos of the Cloud.” ↩

Bratton, “On the Nomos of the Cloud.” ↩

Kittler, Gramophone, Film, Typewriter, xxxix. ↩

F. L. Bauer, “Cellar Principle of State Transition,” Annals of the History of Computing 12 (1990): 42. ↩

Bauer, “Cellar Principle of State Transition,” 43. ↩

Bauer, “Cellar Principle of State Transition,” 43. ↩

Siegfried Zielinski, “Introduction: The Idea of a Deep Time of the Media,” in Deep Time of the Media: Toward an Archaeology of Hearing and Seeing by Technical Means (Cambridge: MIT Press, 2006), 5. ↩

“Write once, run anywhere?” ComputerWeekly.com, accessed January 17, 2012, http://www.computerweekly.com/feature/Write-once-run-anywhere. ↩

Article: Creative Commons Attribution-NonCommercial-ShareAlike 3.0 Unported License

http://creativecommons.org/licenses/by-nc-sa/3.0/

Image: "Introduction: Paragraph 22"

From: "Drawings from A Thousand Plateaus"

Original Artist: Marc Ngui

https://amodern.net/artist-profile-marc-ngui/

Copyright: Marc Ngui